Leeham News and Analysis

There's more to real news than a news release.

Bjorn’s Corner: Aircraft engines in operation, Part 3

February 3, 2017, ©. Leeham Co: In the last Corner, we went through how our airliner engine reacts to the different phases of flight, including what happens when we operate in a hot environment.

We also showed how engine manufacturers make a series of engines with different thrust ratings by de-rating the strongest version through the engine control computer.

We will now look deeper at how engines are controlled and why so-called flat-rating is important.

Engine control

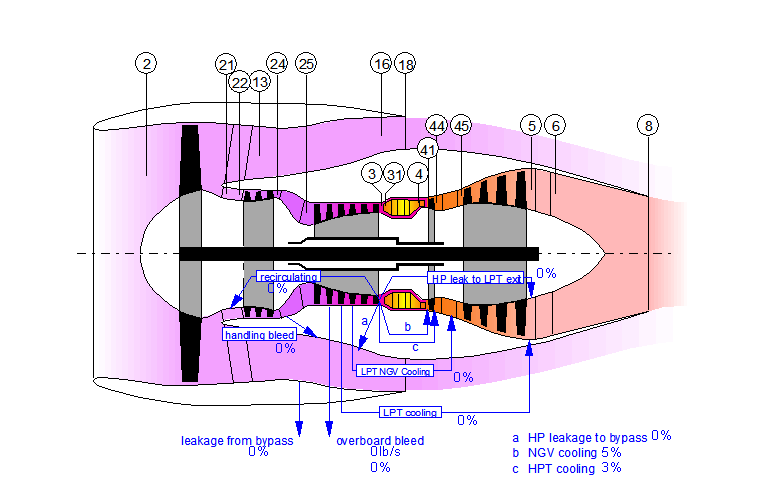

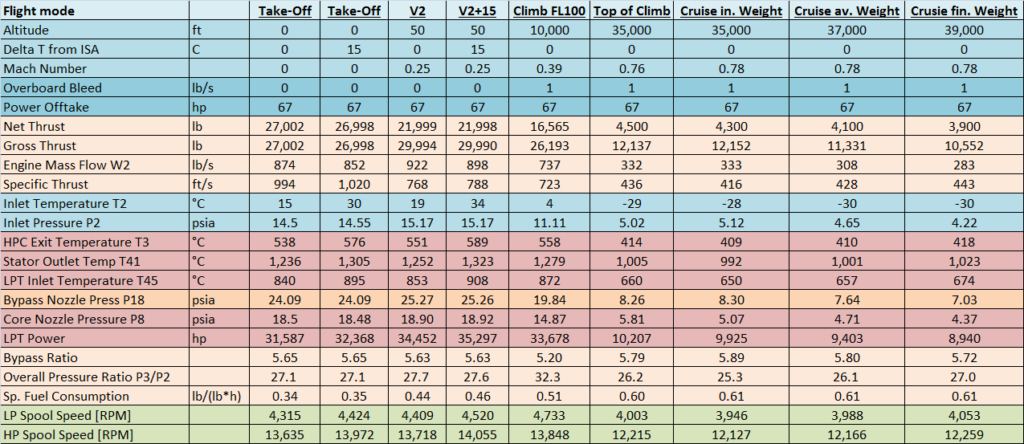

In Figure 2, we have added the inlet air temperature and pressure (T2, P2) and exhaust nozzle pressures P18 and P8 to our table (Figure 1 shows where these are measured). With this addition, we have the key parameters that engine manufacturers use to control the engine.

The thrust of the engine is controlled by how much fuel is injected into the combustor. This controls the temperature of the gas that enters the turbines. The energy the turbines extract from the gas is proportional to the Temperature Entering the Turbines (TET). In our table, it is listed as Stator Outlet Temp, T41.

Thrust control

An airliner cannot function with an engine that delivers a varying thrust. On a twin engine aircraft, this can mean different thrust levels on the two sides of the aircraft, which could cause dangerous situations.

The pilot must be able to set a thrust level and get the same thrust on both sides. This must hold even when a new engine (or one fresh from overhaul) is paired with one that is close to its next overhaul.

The engine’s control computer, the FADEC, controls the thrust level for GE and CFM engines via fan RPM and for Pratt & Whitney/Rolls-Royce engines via the Engine Pressure Ratio (EPR). The EPR is measured between inlet (P2, Figure 1) and exhaust nozzles (P8 for Pratt & Whitney, P18 and P8 for Rolls-Royce).
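As a rough illustration of the EPR idea, the sketch below divides a nozzle pressure by the inlet pressure. The function name and the pressure values are assumptions made up for the example, not data for any real engine, and it does not model how Rolls-Royce combines P18 and P8.

```python
def engine_pressure_ratio(p_inlet_kpa: float, p_nozzle_kpa: float) -> float:
    """Return EPR as nozzle pressure divided by inlet pressure (P8 / P2)."""
    if p_inlet_kpa <= 0:
        raise ValueError("inlet pressure must be positive")
    return p_nozzle_kpa / p_inlet_kpa


# Illustrative numbers only (assumed, not taken from Figure 2).
epr = engine_pressure_ratio(p_inlet_kpa=101.3, p_nozzle_kpa=152.0)
print(f"EPR = {epr:.2f}")
```

The point of the ratio is that it follows what the engine actually delivers at the nozzle, whereas a fan RPM target is an indirect measure of thrust.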

At the same time as the FADEC controls the set thrust, it also makes sure that temperatures, pressures and RPMs do not go over their allowed maximum values. Temperatures and pressures in the different parts of the engine are controlled so that material limits are not exceeded.

The same goes for RPM. The loads on the rotors holding the compressor and turbine blades go up with increasing RPM. The certification documents for an engine therefore contain maximum RPMs for the engine’s low and high pressure spools.

Flat rating

If the air that enters the engine (T2) is colder than normal, the air temperature which enters the combustor will be lower. This means more fuel can be injected in the combustor before the maximum allowed TET is reached, creating a gas with higher energy. The engine can then develop more power over the turbines, which enables more thrust.

The structures that hold the engines are dimensioned for the maximum loads the engines can exert on the airframe. To avoid dimensioning the aircraft for a cold day (which is not the critical case for take-off), the engines are flat-rated to a maximum thrust, Figure 3.

The FADEC will keep the engine’s take-off thrust constant at the agreed “Flat rated take-off thrust” up to an ambient temperature called the “Kink point.” This is normally 15°C over ISA, i.e. 30°C at sea level. Below the Kink point, the FADEC holds the thrust level by reducing the fuel supply to the combustor. The result is that the turbines run cooler (T41 shows the effect on the TET).
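The flat-rating logic can be sketched in a few lines. The ISA temperature model (15°C at sea level, falling 6.5°C per 1,000 m) is standard, but everything else here is an assumption for illustration: the function names, the thrust expressed in percent, and especially the linear 1% thrust loss per °C above the Kink point, which is a placeholder and not a figure from any engine manufacturer.

```python
ISA_SEA_LEVEL_C = 15.0       # ISA sea-level temperature
ISA_LAPSE_C_PER_M = 0.0065   # ISA troposphere lapse rate
TROPOPAUSE_M = 11_000.0      # the linear model below is only valid up to here


def isa_temperature_c(altitude_m: float) -> float:
    """ISA temperature at a given altitude in the troposphere."""
    if altitude_m > TROPOPAUSE_M:
        raise ValueError("linear ISA model is only valid up to 11,000 m")
    return ISA_SEA_LEVEL_C - ISA_LAPSE_C_PER_M * altitude_m


def kink_point_c(altitude_m: float, isa_offset_c: float = 15.0) -> float:
    """Kink point as ISA temperature plus an offset (15 C in the article)."""
    return isa_temperature_c(altitude_m) + isa_offset_c


def takeoff_thrust(oat_c: float, flat_rated_thrust: float, kink_c: float,
                   loss_per_c: float = 0.01) -> float:
    """Flat-rated thrust up to the kink point, then an ASSUMED linear decay."""
    if oat_c <= kink_c:
        return flat_rated_thrust  # FADEC holds thrust by trimming fuel
    fraction = max(0.0, 1.0 - loss_per_c * (oat_c - kink_c))
    return flat_rated_thrust * fraction  # TET-limited region (placeholder slope)


kink = kink_point_c(0.0)  # 30 C at sea level, as in the text
for oat in (0.0, 15.0, 30.0, 40.0):
    print(f"OAT {oat:5.1f} C -> thrust {takeoff_thrust(oat, 100.0, kink):6.1f} %")
```

Below the Kink point the function returns the same thrust regardless of temperature, which is the "flat" part of the rating. Above it, thrust has to fall because the TET limit is reached.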

As we saw in Figure 2, the different phases of flight put different stress levels on the engine. An engine that would run at take-off stress levels all the time would not last long.

In the next Corner, we will look at how the FADEC brokers the aircraft’s need for thrust for different flight phases against engine longevity.

Hello Bjorn,

Some questions… I hope I won’t be the only one asking 😀

Because for sure I’m not the only one reading these articles!

I’ve heard of a fan pressure ratio. Is it P2/P18? What is the impact of this ratio on engines? Looking forward, with variable-pitch fans around the corner (of the decade), this pressure ratio will be a new “variable” to play with!

Can we sort current engines using the fan pressure ratio?

On the airframe side, what is the impact of a temperature increase on the lift?

Bonne journée !

Fan Pressure Ratio (FPR) is not P18/P2, it’s P13/P2. There is a slight difference as P18 is at the exit of the bypass nozzle and it’s shaped to give the correct exit speed of the bypass air compared to the core exit speed.

FPR is coupled to specific thrust and BPR. For the medium BPR CFM56 it’s around 1.7 for the bypass channel, a bit less for the core channel. For a BPR 10 engine it’s more like 1.4-1.5. You could sort engines on FPR but even better on specific thrust.

Hot temperature will lower air density and therefore reduce lift.
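To put a number on this, here is a small sketch using the ideal gas law for dry air. The function name and the two example temperatures are assumptions chosen for illustration. At a given speed, wing area and lift coefficient, lift scales directly with density.

```python
R_DRY_AIR = 287.05  # J/(kg*K), specific gas constant for dry air


def air_density(pressure_pa: float, temperature_c: float) -> float:
    """Density of dry air from the ideal gas law, rho = p / (R * T)."""
    return pressure_pa / (R_DRY_AIR * (temperature_c + 273.15))


# Sea-level standard pressure, ISA day versus a day 20 C hotter (assumed case).
rho_isa = air_density(101_325.0, 15.0)
rho_hot = air_density(101_325.0, 35.0)
print(f"ISA day: {rho_isa:.3f} kg/m3, hot day: {rho_hot:.3f} kg/m3")
print(f"Density (and lift at the same speed) ratio: {rho_hot / rho_isa:.3f}")
```

For this 20°C rise at sea-level pressure, the density comes out roughly 6 to 7 percent lower, and so does the lift at the same speed and angle of attack.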

Thanks

Then hot air is a double pain: you need more speed and thrust, and you have less! Is that it?

The FPR of the Leap vs the PurePower should also be different, I guess?

Is there a reliability, accuracy, cost or other benefit to the different methods utilised in FADECs of different manufacturers to control thrust? And does engine philosophy/architecture have a bearing?

There is. I suspect he will go into more detail in the next corner.

You mean setting thrust based on N1 RPM or EPR. It’s history and philosophy, I think. N1 does not directly cover deterioration of the engine, which EPR does, but pilots like N1 better. It’s simpler to understand. The best method is used on the A350: you don’t care what the engine OEM does, the ECAM display shows percent engine power and the rest is calculated by the computers driving the display and throttles. End of N1 vs. EPR discussion.

How is the percent engine power calculated then? With more than the “classical” EPR/RPM parameters?

Interesting that EPR seems to be favored by pilots!

Thanks Bjorn & Pegasus.

That obviously leads to two other questions. Why did it take until the A350 to combine the best aspects of both, as far as the cockpit UI is concerned? (Presumably it can’t be down to inadequate onboard computing power on any design for many years, or to sensor fidelity or the ability to develop suitable algorithms.) And is the A350 universally (or at least very widely) considered the best/“end of” the discussion, or is this your personal perspective, Bjorn?

It was actually the A380 that started the unified percent UI for throttles. Why it took so long I don’t know. I guess the EPR camp fought for their more correct thrust setting display (N1 is not 100% correlated to thrust) but finally realized that pilots preferred the GE/CFM method. Then Airbus solved it once and for all.

I have talked to perhaps 50 airline pilots re EPR vs N1 and haven’t found one who preferred EPR so it’s not my opinion. But it’s a technically more correct way to measure thrust. Re if A380/A350 is universally liked? I can’t say, but those pilots I have met (more than 10) like it, it’s simple and logical.

Thanks Bjorn. Sounds to me like engineers had too much say at P&W/RR. It is similar to the whole issue of whether a control column/stick etc. should offer a visual position cue to status (actually repeated on roads with e.g. electric parking brakes that give no visual cue whether they are on or off when the ignition is off).

There is an alternate mode (N1 mode on PWA engines) that lets you rev to redline RPM even in cold climate conditions and have the higher thrust. N1 mode is seldom used on PWA engines.

Hello Claes

I don’t know the roots of PWA’s choices, but I recall some cases where the EPR measurement was misleading (in pre-FADEC times, the 737 in the Potomac for instance).

Can an RPM-based approach be fooled by icing or other factors?

This article has insight into that crash:

http://theflyingengineer.com/flightdeck/cockpit-design-epr-vs-n1-indication/

EPR is more vulnerable to icing but I think Boeing have probably learned from and overcome those earlier defects.

EPR still relies on 2 sensors fore and aft of the engine afaik. EPR is more reliable when dealing with foreign objects like bird strikes however and is considered a more accurate measurement. There are variants such as IEPR.

With climate change, is temperature at altitude going to begin to affect engine operations?

We are talking about fractions of degrees which will have a large influence on the climate but not really on the physics of flight. But the winds that can be the result of a changed climate can, more than any change in temperature vs an ISA atmosphere.

Same fundamentals as Bjorn said here: higher temperature = less air density = less thrust. Whether a couple of degrees at the surface will change much at FL300-400, I doubt. Incidentally, in the summers of both 2015 and 2016, flying to Anchorage, I encountered temperatures in Arctic Canada at 78-79°N where our TAT was +5-8°C (at ISA temps it is normally in the range -25 to -30°C). This meant we had issues climbing due to less thrust as temperatures rose significantly above the tropopause level. You may see these kinds of temperatures at cruise levels around the equator, but you certainly do not expect them close to 80°N!

I am highly interested in this series presented by Bjorn. I have read (studied) every one. The presentation and responses to this one focus on steady state conditions. Is there any threat of engine damage (from over-heating) during ramp up to steady state? How is the fuel ignited initially? Is ignition required constantly? Are there any issues with imbalance due to residue from products of combustion?

Maybe these questions are too far off the topic for which I apologize.

Hi Norm,

There are definite dangers of damage caused by transients, mostly caused by the pilot moving the throttle levers fast. FADECs have diminished this drastically. When I started flying jets, the engine control was still hydro-mechanical and it did not mask quick throttle movements to the engine. You learned to move the throttle slowly. I still do for all engines (also piston) but the pilots who have learned to fly jets with FADECs move the throttle much faster (almost slam it). The FADEC is 100% in control (the hydro-mechanical control was not) and makes sure the engine spools up or down at a safe pace.
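The idea of the FADEC masking a slammed throttle can be pictured as a simple rate limiter. This is only a conceptual sketch: the function names, the percent-power scale and the 20% per second limit are all assumptions, and a real FADEC schedules fuel against many more parameters than this.

```python
def rate_limited_command(current: float, target: float,
                         max_rate_per_s: float, dt_s: float) -> float:
    """Move `current` toward `target`, but no faster than max_rate_per_s."""
    max_step = max_rate_per_s * dt_s
    delta = target - current
    if delta > max_step:
        delta = max_step
    elif delta < -max_step:
        delta = -max_step
    return current + delta


def spool_trace(start: float, target: float, max_rate_per_s: float,
                dt_s: float, steps: int) -> list[float]:
    """Commanded power over time after the lever jumps to `target`."""
    values = [start]
    for _ in range(steps):
        values.append(
            rate_limited_command(values[-1], target, max_rate_per_s, dt_s)
        )
    return values


# The pilot slams the lever from 20% to 100%; the assumed limit is 20%/s.
trace = spool_trace(start=20.0, target=100.0, max_rate_per_s=20.0,
                    dt_s=0.5, steps=10)
print(trace)
```

However fast the lever moves, the command the engine sees climbs in bounded steps and then settles on the target.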

There is a spark plug in the combustor which ignites the air/fuel mix at engine start and you can put it on during flight. Several engines have a standard operational procedure to have the spark on during e.g. engine icing conditions.

We will start to treat residues in coming Corners, when we come to time on wing and what makes you pull an engine for maintenance. I would say imbalance is not so common, but residue contamination and especially erosion are common reasons for taking an engine off the wing.

Note: this writer is a different Bjorn…(Editor)

Hi Bjorn and many thanks for your very interesting articles.

I think many airlines try to increase climb speed earlier to save fuel. How will an increased climb speed generally affect the stress on the engines?

Let’s say that we accelerate a heavy A321 to 315 kt at 4000 ft (with ATC approval) and then end up at TOC at M 0.78 (same as cruise speed).

Hi Bjorn,

Increasing climb thrust will raise the temperatures in the engine. This increases the climb rate (ft/min). As long as you are within maximum climb thrust, you are OK. If you always climb at max climb thrust, you will affect time on wing for the engine. Increasing climb speed by flying at a higher speed point is not the same thing: you climb at a shallower angle, which is further from the best lift/drag ratio. But an airliner has things that cost per minute (crew, hourly maintenance charges, utilization effects), so on the whole a faster climb, i.e. one that takes a shorter time, can be advantageous. The FMS has all the parameters to do this trade, and you regulate your priorities with the cost index.