Leeham News and Analysis

There's more to real news than a news release.

Bjorn’s Corner: Analysing the Lion Air 737 MAX crash, Part 1.

November 1, 2019, ©. Leeham News: We start the series on analyzing the Lion Air 737 MAX crash by looking at what went wrong in the aircraft. It’s important to understand MCAS is not part of what went wrong. It worked as designed during all seven Lion Air flights we will analyze in this series.

What was wrong with these aircraft was a single sensor giving a faulty value. How a single faulty sensor could get MCAS to doom the JT610 flight (called LNI610 in the report) is something we look into later in the series. Now we focus on why the sensor came to give faulty values on five out of seven Lion Air flights and how these flights could be exposed to two different sensor faults.

What went wrong in the Lion Air flights?

The final crash report of Lion Air LNI610 gives us enough detail to conclude what went wrong not only during the LNI610 flight but also during four of the six flights before it.

We know the culprit for the LNI610 flight and the previous flight, LNI043, was a faulty Angle of Attack (AoA) sensor. But there was trouble on earlier flights too, and what exactly was the fault on flights LNI043 and LNI610?

The final report gives enough detail for the probable fault cause on all flights to be deduced. An intermittently faulty AoA sensor was fitted during the five flights before LNI043 (the flight before LNI610). Before LNI043, it was replaced with a misaligned sensor from Lion Air's pool of replacement spares.

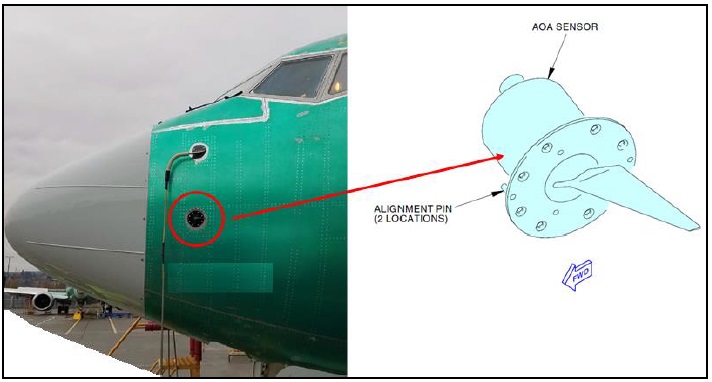

The AoA sensor is of a common type from Rosemount Aerospace (Figure 1), today part of Collins Aerospace. The sensor has a wing-like vane which follows the local airflow. The vane is coupled to two resolvers via its rotatable mounting drum. Resolvers are well-known, reliable transducers that transmit their angle information over electrical cables to the avionics boxes.

One resolver (No 2) sent its angle signal to the Air Data Inertial Reference Unit (ADIRU), which forwarded it, together with other data, to the Flight Control Computer (FCC). The other resolver (No 1) sent its angle signal to the Stall Management and Yaw Damper unit (SMYD).

During three of the five flights before LNI043, the crews complained about speed and altitude flags appearing on the Captain’s instruments, declaring the data was not valid. Before the LNI043 flight, the AoA sensor was replaced with a refurbished unit from Lion Air’s spare parts pool.

The replaced unit was subsequently examined for faults, and an intermittent open circuit was found in the resolver feeding the ADIRU/FCC. During flights, the AoA signal to the ADIRU/FCC could disappear and then be OK again later in the flight or when tested on the ground.

This fault, a complete loss of the left AoA signal, did not trigger MCAS. Without a valid AoA signal, the ADIRU could not correct the speed and altitude data measured with the aircraft's pitot sensors for AoA. The left-side speed and altitude data were therefore flagged as invalid whenever the AoA signal was absent.

When the ADIRU sent no AoA signal to the FCC, MCAS was not triggered, as it does not work without a valid AoA signal. When the signal was present, it showed a normal AoA, so MCAS was not called for.
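The two fault behaviours above can be summarised in a small model. This is a minimal sketch, not Boeing's logic: the MCAS threshold is a hypothetical placeholder, and only the widely reported activation conditions (valid high AoA, flaps up, autopilot off) are modelled. A dropped signal is represented as `None`.

```python
from dataclasses import dataclass
from typing import Optional

# HYPOTHETICAL activation threshold (deg); the real value is not given in this article.
MCAS_AOA_THRESHOLD_DEG = 14.0


@dataclass
class LeftSideStatus:
    speed_alt_valid: bool  # can the ADIRU correct pitot data for AoA?
    mcas_command: bool     # would MCAS be called for in this simplified model?


def left_side_status(aoa_deg: Optional[float], flaps_up: bool,
                     autopilot_on: bool) -> LeftSideStatus:
    """Simplified reaction of the left ADIRU/FCC to the AoA input.

    aoa_deg is None when the intermittent open circuit drops the signal.
    """
    if aoa_deg is None:
        # No valid AoA: speed/altitude cannot be AoA-corrected and are flagged,
        # and MCAS stays inactive because it needs a valid AoA.
        return LeftSideStatus(speed_alt_valid=False, mcas_command=False)
    # Signal present: air data is usable; MCAS only acts on a high AoA
    # with flaps up and the autopilot off.
    high_aoa = aoa_deg > MCAS_AOA_THRESHOLD_DEG
    return LeftSideStatus(speed_alt_valid=True,
                          mcas_command=high_aoa and flaps_up and not autopilot_on)
```

With a dropout (`None`) the model flags the air data and leaves MCAS silent, as on the flights before LNI043; a normal reading such as 5° gives valid data and no MCAS command. Only a reading that is present and wrongly high, as with the later biased sensor, produces a command once the flaps are up.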

The intermittently functioning AoA vane finally gave clear AoA fault indications in the Onboard Maintenance Function (OMF), a maintenance information concentrator introduced with the 737 MAX. The 737 MAX Aircraft Maintenance Manual (AMM), which had guided all maintenance actions around the faulty sensors, said the sensor should be changed.

The aircraft was grounded awaiting a replacement AoA sensor. After two days the replacement sensor was fitted to the aircraft, and the faulty sensor was sent into the replacement loop, where it would be checked and repaired. The investigation found no fault with Lion Air's maintenance actions up until the installation of the replacement sensor.

Replacement Angle of Attack vane with a bias

The investigators conclude the replacement sensor was functioning correctly electrically but had a +21° bias fault. They conclude this from the traces recorded on the aircraft's Flight Data Recorder, which show the left sensor following the right sensor with a constant +21° difference whenever the systems were powered.
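The reasoning from the recorder traces can be illustrated with a short calculation: a pure bias shows up as a large mean difference between the two sensors with almost no spread, whereas a scale or linkage error would make the difference vary with angle. A minimal sketch; the sample values below are illustrative, not the recorded data.

```python
from statistics import mean, pstdev
from typing import List, Tuple


def estimate_bias(left_deg: List[float], right_deg: List[float]) -> Tuple[float, float]:
    """Return (mean difference, population std dev of the difference)
    between two AoA traces sampled at the same instants."""
    if len(left_deg) != len(right_deg) or not left_deg:
        raise ValueError("traces must be non-empty and equally long")
    diffs = [l - r for l, r in zip(left_deg, right_deg)]
    return mean(diffs), pstdev(diffs)


# Illustrative right-vane values and a left vane offset by a fixed +21 deg.
right = [2.0, 5.5, 8.0, 12.0, 6.5]
left = [r + 21.0 for r in right]
bias, spread = estimate_bias(left, right)
```

Here `bias` comes out at 21.0 and `spread` at 0.0: the signature of a constant offset rather than an electrical fault.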

This resulted in an immediate stall warning when the LNI043 MAX rotated: the landing gear switch now told the systems the aircraft was flying, and both the SMYD and the ADIRU were being fed a signal 21° too high. (No system relies on AoA information below lift-off speed, as the sensors' angle information is unreliable until the airflow past them is fast enough.)

A 737 MAX stalls at around 20° AoA with slats and flaps deployed, and the left AoA vane was now feeding the SMYD and ADIRU about 30° AoA (21° plus the actual angle of attack). When the flaps were retracted, MCAS activated, and we know the result. We will analyze this phase in detail in later Corners.
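The arithmetic, and why the warning appeared at rotation rather than during taxi, can be sketched in a few lines. This is a simplification under stated assumptions: the real stall warning threshold sits below the stall AoA and varies with flap setting and Mach, so the single 20° figure from the text stands in for it, and the 9° actual AoA at rotation is an illustrative value.

```python
# Approximate values from the text; the real stall-warning schedule is more complex.
STALL_AOA_FLAPS_DEG = 20.0  # approximate stall AoA with slats and flaps out
BIAS_DEG = 21.0             # bias of the replacement vane


def indicated_aoa(actual_deg: float, bias_deg: float = BIAS_DEG) -> float:
    """What the biased left vane reports to the SMYD and ADIRU."""
    return actual_deg + bias_deg


def stall_warning(indicated_deg: float, in_air: bool,
                  threshold_deg: float = STALL_AOA_FLAPS_DEG) -> bool:
    """AoA-based warnings are gated off on the ground by the air/ground switch."""
    return in_air and indicated_deg >= threshold_deg
```

On the ground, `indicated_aoa(0.0)` is already 21°, but `stall_warning(..., in_air=False)` stays off. At rotation, an illustrative actual AoA of 9° becomes an indicated 30°, and the warning fires as soon as the air/ground switch reports "in air".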

Now we look at what the investigation concludes about how an AoA vane with a +21° faulty bias (it should be 0°) could pass into the Lion Air pool of replacement spare parts and how it could be fitted on the aircraft without the mechanic discovering it gave the wrong values.

The unit had been removed from another 737 and refurbished to a serviceable standard by a licensed maintenance shop in Florida. The investigation found the shop used a procedure for verifying the correct functioning of the unit which could result in a 21° faulty bias.

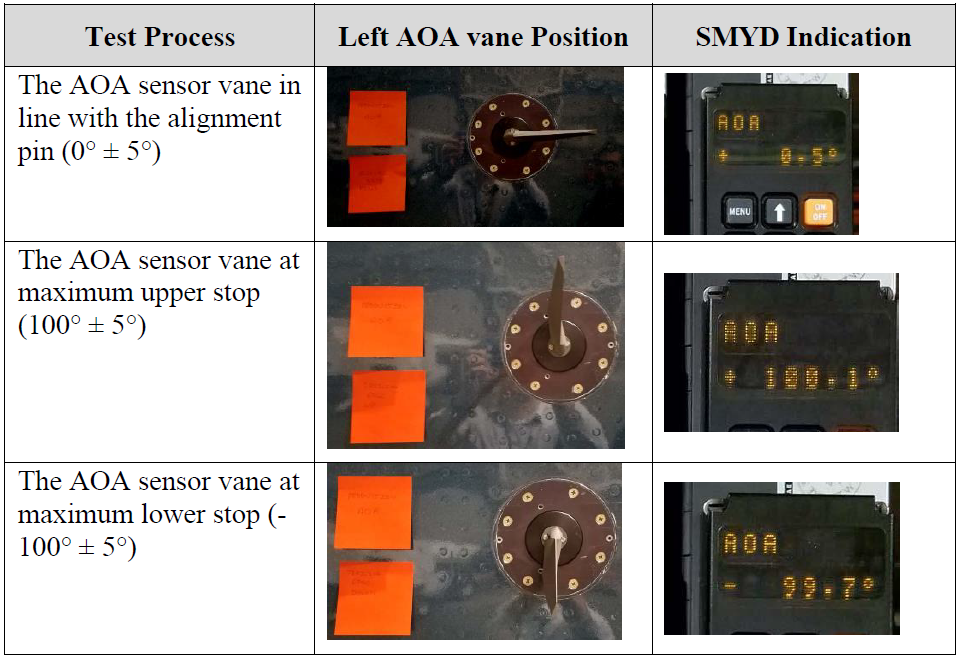

According to the maintenance procedures for the MAX, this should have been discovered during the installation and verification of the unit on the Lion Air MAX. The mechanic can use a procedure that requires no special tools: he installs the unit, has the vane held in a fixed position, and reads the angle value on the front of the SMYD unit in the avionics bay below the cockpit (Figure 2).

Figure 2. The test of the vane positions with the reading of the transmitted AoA on the SMYD unit. Source: JT610 final report.

The mechanic has not been able to prove he did this check. His presented photo evidence of such a check has been proven as false according to the investigation. A presented cockpit photo of the pilot’s displays was from before the sensor change and a photo of the SMYD with a correct angle was proven as coming from another aircraft.

The investigation concludes that the incorrect maintenance procedure by Lion Air's mechanic let an incorrectly aligned AoA sensor make its way onto the aircraft for the LNI043 flight.

To make the sensor story complete, the working assumption about the AoA sensor on Ethiopian Airlines ET302 is that the vane was torn off by a bird strike at lift-off. The behavior of the sensor after lift-off is consistent with a sensor that has lost its vane: it tumbles to a value above +70°, which is what happened on ET302.

This Corner has been about what happened to the AoA sensor in LNI043 and LNI610. We have also put forward the present thinking about what happened with the ET302 sensor.

In the next Corners, we will look into how such faults can be allowed to bring down a modern airliner.

Was there any safety assessment done on this critical component failure?

From the KNKT final report

===================

14 FAR 25.671 (c) states that “probable malfunctions must have only minor effects on control system operation and must be capable of being readily counteracted by the pilot”. This includes any single failure, such as the AOA sensor malfunction.

========================================================

Boeing considered that the loss of one AOA and erroneous AOA as two independent events with distinct probabilities. The combined failure event probability was assessed as beyond extremely improbable, hence complying with the safety requirements for the Air Data System. However, the design of MCAS relying on input from a single AOA sensor, made this Flight Control System susceptible to a single failure of AOA malfunction.

================================

Boeing indicated to investigators that the failure modes that could lead to uncommanded MCAS activation (such as an erroneous high AOA input to the MCAS, as occurred in the Lion Air accident) was not simulated as part of these functional hazard assessment validation tests.

=========================

Erroneous AOA signals are not frequent events. Boeing reported that 25 activations of stick shaker mostly due to AOA failures occurred in 737 aircraft for the past 17 years during more than 240 million flight hours. The MCAS architecture with redundant AOA inputs for MCAS could have been considered but was not required based on the FHA classification of Major.

==================
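The figures quoted from the report can be put side by side with the per-flight-hour probability targets used in transport-category system safety assessments. This is a rough sketch under two stated assumptions: the stick-shaker count is treated as a proxy for erroneous-AoA events (the report says "mostly due to AOA failures"), and the limits are the commonly cited values from EASA AMC 25.1309 (Major of the order of 1e-5, Hazardous 1e-7, Catastrophic 1e-9 per flight hour). The independence product illustrates how the report says Boeing treated two separate AoA failure events.

```python
from typing import List, Tuple

# Commonly cited per-flight-hour limits (EASA AMC 25.1309); treat as approximate.
LIMITS: List[Tuple[str, float]] = [
    ("Catastrophic", 1e-9),  # extremely improbable
    ("Hazardous", 1e-7),     # extremely remote
    ("Major", 1e-5),         # remote
]


def rate_per_flight_hour(events: int, flight_hours: float) -> float:
    return events / flight_hours


def acceptable_classes(rate: float) -> List[str]:
    """Failure-condition classes whose probability limit this rate meets."""
    return [name for name, limit in LIMITS if rate <= limit]


def combined_if_independent(p_a: float, p_b: float) -> float:
    """Probability of both events, valid ONLY if they are truly independent."""
    return p_a * p_b


rate = rate_per_flight_hour(25, 240e6)  # about 1.04e-7 per flight hour
```

On these assumptions the rate of roughly 1e-7 per flight hour is acceptable for a condition classified Major but not for Hazardous or Catastrophic. A function that depends on one sensor inherits that single-sensor rate; the product of two probabilities only applies when two independent failures are both needed.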

” It’s important to understand MCAS is not part of what went wrong. It worked as designed during all seven Lion Air flights we will analyze in this series.”

MCAS was wholly dependent upon one AOA sensor in its design. It had unlimited power over the stabilizer. It was designed to fire for a few seconds and then hide for a few seconds, confusing the pilots. Had it fired and not hidden, pilots would have thought of a runaway stabilizer, but since it hid every few seconds, that wouldn't occur to them right away, if at all.

MCAS is a bad design, and that's why Boeing is fixing MCAS, not trying to fix AOA sensors. AOA sensors are in the 737-NG, and it has flown for years without crashing. MCAS is not. The original hazard analysis of MCAS was faulty. That is obvious. That is being corrected, and MCAS is now being designed to a higher reliability rating; the AOA sensors are not being redesigned. Even if you came up with a foolproof AOA sensor system, MCAS would need to be redesigned.

Immediately after the Lion Air accident, Boeing fessed up to including a software program within the Speed Trim System called MCAS. Had it not, the investigators reading the flight data recorder data would have been very confused. How could one bad AOA sensor trigger a nose-down event and crash an aircraft? They would have grounded the 737 MAX program right there until they could figure it out. I wonder whether the CEO of Boeing at the time asked his head of Engineering what an MCAS was, or did he know? I'm assuming the Board of Directors found out about MCAS from news articles.

AOAs have failed in the past and will probably fail in the future. They fail at a catastrophic rate, from a hazards viewpoint. If they are put in a critical flight control loop, their failure needs to be accounted for by the system reading their data, unless they can be built to be much more reliable. Is there any other flight control system dependent on AOA sensors without fault control logic to account for a failed AOA sensor? The Speed Trim System obviously comes to mind. But it has limit switches to cut out its inputs, and MCAS deactivated those. Where is the OFF switch for MCAS?

MCAS could be turned off by the trim cutoff switch.

The pilots faced extreme adversity in not knowing about MCAS and, as you said, in the intermittent MCAS behavior, which was not expected. They were also faced with the numerous other system warnings that accompanied the AoA sensor malfunction.

In those circumstances, the Boeing assumption about rapid response of the pilots proved unrealistic, not just in this flight but in others. Despite this, the captain did respond to interrupt and counteract MCAS.

No one is defending MCAS, it has the flaws you mentioned and this has been admitted by Boeing. But as the Lion Air report confirms, there were several other contributing factors. They should all be explored and considered. Bjorn’s goal here is to do that, I believe.

Agreed. Clearly one good pilot dealt with it on a previous flight (the jump seater).

However they also had more time as it was intermittent.

But on two previous flights, the pilots flying (4) did not assess it or write it up correctly. So we have 8 pilots who did not get it.

While it says a lot about pilots' capability, you also do not design a system that puts pilots into that position.

With all that was going on you have to be very good to focus in on the real issue when there is all the rest occurring.

I would guess the top 20% would spot it fast; maybe 40-50% would get it eventually.

It would be interesting to blind test this and see how long it took all pilots at Lion or Ethiopian, even knowing what they know now.

Clearly Ethiopian pilots had some idea and still did not get it and did not have the crew coordination to deal with it.

@TW, did you get this one correct? The first flights, except the two with the ‘wrongly calibrated’ AOA sensor, had one with an intermittent fault: it either worked fine, or it didn't work at all. In the latter case there was no AOA signal from the sensor, so MCAS was silent. However, other PFD data, such as air speed and altitude (comparisons), became erroneous, and alerts were given, but there were no stick-shaker ‘actions’.

Then, on the flight before the accident flight, the AOA sensor was replaced with one ‘that worked’ but was offset by 21 degrees. So all the mentioned flights had an erroneous AOA sensor, but only the last two had one that detected a stall and started the stick shaker and MCAS.

Nitty-gritty, you say? Oh no, it should be correct!

I once suggested, I believe it was to Peter Lemme, that we (actually, someone who does this type of thing) let a larger pilot group go through a closely monitored simulator test (of various aspects of flying scenarios). The pilot selection should reflect ‘world-wide’ pilot experience; in other words, it should not be biased. I would guess that NASA's large Human Factors group is both capable and ‘trustworthy’. Or is this still a no-no type of thing?

“The mechanic has not been able to prove he did this check. His presented photo evidence of such a check has been proven as false according to the investigation.”

The exact wording used in the report is “not valid evidence” instead of “proven as false”.

I think it is safe to say the engineer in Denpasar did not document his check with photos, likely because he skipped it. Not that he falsified evidence, which would be a whole degree worse: misleading the investigation.

Allegedly photos were provided which have been proven to have been taken on another date.

Exactly, but being made on another date* does not constitute false evidence. False evidence, a very loaded term, would be evidence that had been specifically manipulated to misrepresent reality. That is different from evidence which, while faithful to reality, reflects a part that is irrelevant to the investigation's purpose, i.e. establishing whether the alpha vane was checked according to procedures.

The report correctly concluded it was not, but circumscribes itself to the matters of fact made public. Bjorn’s wording extends the report’s conclusion towards serious interpretations which the report apparently was careful about not advancing.

The report does not attempt to establish blame, and specifically says that is not its purpose. That accounts for the choice of wording, it was just a statement of fact that the provided photos did not substantiate the claim of inspection.

Bjorn’s column is an opinion and he has concluded what a reasonable person might conclude, given the evidence. If the suspicion is that the inspection was not done, and the photos prove to be invalid for that context, but are claimed to be valid by the provider, then it could reasonably be said that this claim was false.

False, not according with truth or fact, incorrect. The evidence is undoubtedly false, but we can’t be sure why it was presented. There are several possibilities and deliberate fraud is one of them.

Yes… but then it is not “proven as false according to the investigation”. It is such according to one's reading (and the claims regarding such evidence), albeit, as you put it, a reasonable one.

Which raises the question: are maintenance reports supposed to routinely attach photos as per procedure, or do read values suffice? The report says “provided the investigation several photos”, which suggests they were not readily available on file and the engineer had to be contacted to obtain them.

VDG:

That is an amazing attempt at a shift, even by current US politician standards.

You present information from different dates and aircraft and it's not false? Phew.

If it flies like a duck, swims like a duck, quacks like a duck, has a duck bill and we can now add duck DNA, it is a duck.

Sorry, but the photos are definitely proven to be false evidence. Bjorn did not preclude the possibility of an innocent explanation. I believe that the technicians are supposed to provide a photograph to verify that the job has been done correctly. We don't know why the wrong (false) photos were provided, and the authorities are not releasing the reason at the moment.

TW:

The shift is only in your mind. I’ll simply refer to my first comment to clear that up for you.

I was objecting to the lack of precision in Bjorn’s statement. It is totally legitimate to have an opinion, borrowing the investigation’s authority to say something the investigation did not say is something else.

If there were real photos, there would be no need for false ones (well, assuming the real ones did not show you blew it).

Sleight of hand is a form of falsehood and shows intent.

VdG,

I see your point, but (again) keep in mind that accident reports like this are written in the spirit of ICAO Annex 13 (see the bottom of page 2 of the report): don't blame or accuse anyone; that is the task of others.

Then we often see somewhat vague wording like this in accident/incident reports. I strongly recommend the ICAO publication; you can find it on the ICAO website, or just search for it.

It was precisely because I am aware of accident investigation mandates not serving the purpose of attributing blame, that Bjorn’s wording sounded weird, leading me to read the report further, and finding that the report remained within its confines.

“It’s important to understand MCAS is not part of what went wrong. It worked as designed during all seven Lion Air flights we will analyze in this series.”

The deadly part of MCAS was the last-minute change from 0.6 to 2.5. The change was put in after the flight tests on the low-speed stall characteristics, during the ‘wind up’ turn. If they had not put in those last-minute changes, which weren't properly documented to the FAA, the pilots in the two accidents may have been able to overcome MCAS through the elevator pitch control. But in changing MCAS to work at the higher power speed setting, the pilots were not able to overpower MCAS. This last-minute change, it can be argued, didn't have appropriate oversight, failure mode testing or sign-off. That change wasn't put through the same level of testing as the original MCAS design. And it ‘adopted’ the hazard analysis of the 0.6 setting, without adequate review by Boeing and the FAA.

When I read this sentence I knew commenters were going to jump all over it. Bjorn is not saying here that MCAS was a good design, based on good assumptions. He is also not saying that good practices were properly adhered to during the design process. He is saying that MCAS performed exactly as it was designed. (I personally would go even further to say that MCAS performed exactly as it was certified.)

Bjorn is being methodical and precise in his approach to analyzing what happened, like an engineer should. This is the only way one can really get at everything that went wrong, and evaluate their relative contributions. Commenters should be patient as Bjorn clearly states he will get into the MCAS details in subsequent Corners.

I basically agree, Mike. Bjorn’s meaning is clear. But it’s too cute, and is technically wrong. The MCA SYSTEM malfunctioned. It’s a system, after all, and its inputs and desired effects are part of that system. I would agree that the software within the system appears to have performed exactly as designed and certified in moving the horizontal stabilizer as it did.

Hi tem,

Take a look at the NTSB System Safety and Certification Specialist's Report, which is reprinted in Appendix 6.2 of the KNKT Final Report starting on pg. 245.

http://knkt.dephub.go.id/knkt/ntsc_aviation/baru/2018%20-%20035%20-%20PK-LQP%20Final%20Report.pdf

MCAS is an open-loop control law implemented within the STS (Speed Trim System) control law (also open-loop), both of which reside as software on the FCC. It actually says that MCAS is a function within STS.

I get what you’re saying but this all depends on precisely what the word “system” means in this context i.e. where the system boundaries are drawn. I think the way Bjorn seems to be drawing them is consistent with the NTSB’s description, but I see your point of view.

Thanks Mike but both JATR and NTSB still have their own questions about MCAS, to the point of still seeking data on the Max pitch up moment to clarify if it might be stall identification. It’s partially a system inside a system. But AoA is not an input to STS.

MCAS would either function normally, fail or malfunction. On at least 3 flights it malfunctioned. The mitigation of that did not at all work as designed, which is part of the system and certainly “went wrong.”

I agree with what Bjorn is saying. I would not call it cute (maybe too techy), but I do believe it should have some background as to why he says it.

I get it, I certainly wrote enough bad software and built enough bad circuits that did what they were supposed to, I just screwed up the logic for both (of course I tested it and found the bust and fixed it)

Bjorn,

I’m slightly confused. You seem to skip from talking about LNI043 to then talking about ET302. Was the AoA sensor not replaced between LNI043 and LNI610?

No, there was one sensor change for the seven Lion Air flights, between the flight prior to LNI043 and LNI043. The sensor behavior on the LNI043 and LNI610 flights was identical; it was the same replacement sensor.

The sentence about ET302 was only provided to complement the sensor part of the discussion. For ET302 we had a sensor that, from the ADIRU and SMYD point of view, was functional; it delivered values just like the 043 and 610 sensor. The values were wrong, but the inputs were not programmed to invalidate the sensors based on these value streams (up to 80 to 90° is possible with such a sensor when the aircraft is, for instance, tail sliding).

Thanks for clearing that up for me.

Yeah, I struggle with them not ignoring the sensors as bogus when they show a value you could not reach (the symbol ? was used), which was the readout for unreliable in my programs.

Where it was an issue, we wrote programming to put it to a value that was safe (for my systems; in the case of AOA, ignoring it would be the right move).
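The "show ? for unreliable" approach can be sketched as a validation step in front of any consumer of AoA. As noted above, a vane can legitimately read 80 to 90°, so a range check alone would not have rejected the ET302 values; a left/right disagree check catches both the ET302 and the LNI043 cases, although with only two sensors it cannot tell which one is wrong. All limits here are hypothetical illustration values, not certified ones.

```python
from typing import Optional

# HYPOTHETICAL limits for illustration only.
AOA_MIN_DEG = -20.0
AOA_MAX_DEG = 90.0
DISAGREE_LIMIT_DEG = 10.0


def in_range(value: Optional[float]) -> bool:
    return value is not None and AOA_MIN_DEG <= value <= AOA_MAX_DEG


def validated_aoa(left: Optional[float], right: Optional[float]) -> Optional[float]:
    """Return a usable AoA, or None (the '?' readout) when it cannot be trusted."""
    if in_range(left) and in_range(right):
        if abs(left - right) > DISAGREE_LIMIT_DEG:
            return None  # sensors disagree; with two sensors we can't tell which is wrong
        return (left + right) / 2.0
    # One or both sensors missing or out of range: treat as unreliable in this sketch.
    return None
```

For example, `validated_aoa(74.5, 15.3)` (the ET302 values from the preliminary report) and `validated_aoa(30.0, 9.0)` both return `None`, while `validated_aoa(5.0, 5.5)` returns 5.25. A downstream function would then inhibit itself rather than act on the bad value.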

Between those flights it was not. The replacement occurred between LNI775 and flight LNI043. LNI775 is when faults were initially detected. The faults reported on LNI043 did not lead the Jakarta engineer to replace the alpha vane a second time.

Why did you temporarily stop production of the Boeing 737 MAX? There is always a solution for every problem at hand. Please check the steep turn condition! That could be a solution to this problem. Glad to hear that you have completed 560 test flights for the Boeing 737 MAX. It is excellent progress. But did you test the 737 MAX in extreme circumstances like steep turns and speeds so low they approach a stall? ET-302 Captain Yared Getachew informed the air controllers at Addis Ababa Bole International Airport that he faced a flight control problem and requested clearance to get back to the airport. Since the plane was in its takeoff procedure, this shows that the plane was making a steep turn to get back to the airport, and hence MCAS was activated. Please test the steep turn condition to be 100% confident in the MCAS system.

On Seattle Times newspaper dated November 15, 2018 I read “Bjorn Fehrm, a former jet-fighter pilot and an aeronautical engineer who is now an analyst with Leeham.net, said the technical description of the New 737-MAX flight control system – called MCAS (Maneuvering Characteristics Augmentation System) – that Boeing released to airlines last weekend makes clear that it is designed to kick in only in extreme situations, when the plane is doing steep turns that put high stress on the airframe or when it’s flying at speeds so low it’s about to stall. ” It is because of the above statement that I raised my point.

The Ethiopian Aircraft Accident Investigation Bureau preliminary report indicates that ET-302 was in an airworthy condition and the crew possessed the required qualifications to fly the plane. The takeoff procedures were normal, including both values of the AOA sensors. However, shortly after takeoff, the similarities between the two crashes in Ethiopia and Indonesia became clear. The AOA sensors started to disagree: the left sensor reached 74.5 degrees, while the right sensor indicated 15.3 degrees. This shows that Captain Yared Getachew was making a steep left turn back to Bole International Airport to get a fix for the flight control problem he reported to air controllers shortly after takeoff, and due to this steep left turn the left AOA reached 74.5 degrees and hence MCAS was activated. The ET-302 plane was traveling to Nairobi, which is located south of Addis Ababa, but the plane crashed to the north-east of its original path of travel. This shows that the plane was making a steep left turn to get back to Addis Ababa Bole International Airport.

Similarly, the Indonesian Lion Air preliminary report indicated that the Digital Flight Data Recorder of flight JT610 showed a difference of 20 degrees between the left and right Angle of Attack sensors. This shows that the captain was making a steep left/right turn back to the airport to get a fix for the flight control problem he reported to air controllers shortly after takeoff, and due to this steep turn the difference between the left and right AOA sensor readings reached 20 degrees and hence MCAS was activated. The preliminary accident investigation report of JT610 clearly indicates that, about a minute after takeoff, the pilots reported to the terminal East traffic controller, asking for permission to go to a holding point, as they were having flight control problems.

Hence, before stopping the production of these planes, Boeing has to perform the steep turning condition on 737-MAX planes, and address this problem with a scientific approach. Stopping the production is a pessimistic way for solving this problem. Boeing is a big company armed with the required knowledge, skill, manpower, equipment and finance, and hence can solve this problem and make unforgettable history. GO BOEING!

You are making a huge mistake in using AOA to assess a turn.

AOA is not turn-related, other than some difference between the high-side and low-side AOA, and that difference is a much, much smaller variance.

It's not a turn indicator.

High-angle (bank) turns become seriously relevant because they increase the G forces on an aircraft. Double the effective weight and your stall speed goes way up.

Shallow turns also increase it but by small enough amounts not normally to be an issue.

As commercial aircraft normally do no more than 30 deg of bank, you know to keep your nose down enough to stay out of the stall area, AOA or not.
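The effect of bank described above follows from the standard level-turn relations: the load factor is n = 1/cos(bank), and the stall speed rises with the square root of n. The stall AoA itself stays roughly the same; what changes is the speed at which it is reached. A minimal sketch, assuming a level, coordinated turn:

```python
import math


def load_factor(bank_deg: float) -> float:
    """Load factor n = 1 / cos(bank) in a level, coordinated turn."""
    return 1.0 / math.cos(math.radians(bank_deg))


def stall_speed_in_turn(vs_level: float, bank_deg: float) -> float:
    """Stall speed scales with sqrt(n): Vs_turn = Vs_level * sqrt(n)."""
    return vs_level * math.sqrt(load_factor(bank_deg))
```

At 30° of bank, n is about 1.15 and the stall speed rises by only about 7%. At 60° the effective weight doubles (n = 2) and the stall speed rises by about 41%. Neither case comes anywhere near turning a normal AoA into the 74.5° seen on ET302.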

Steep out of the norm turns could well lead to issues, I don’t know that Ethiopian actually was in a steep turn, I would have to see the data.

Due to how it happened, the most likely cause of the AOA failure was a bird strike that jammed the AOA into a fixed and very high position (so high it should have been programmed to register as unreliable and not triggered any action).

Please check the steep, out-of-the-norm turn condition for ET302 and update me on your findings. Do not forget that the captain and the first officer were under 30 years of age, and they could make a hasty turn at takeoff speed when they faced the column stick shaking.

I don’t get the point.

Aircraft are designed to make steep turns, but how is that an issue here?

Clearly MCAS triggered and they lost control.

Clearly they lost speed control (or track of it)

Clearly they did not follow the procedure to deal with a problem MCAS trigger.

Incompetent maintenance crew and incompetent flight crew.

What can go wrong?

Did you watch the hearings and hear the aircraft pilot vent about the obsession with the AoA warning light, saying it was inconsequential? Did you hear the CEO say that the handling characteristics of the Max were supposed to be the same as those of an NG? What would happen if an incompetent maintenance worker didn’t properly calibrate a replaced AoA vane in an NG?

The AOA warning, without knowing that MCAS would follow, is irrelevant.

They already had a number of other reports (speed disagree and altitude disagree) that were not relevant either.

That is the problem with computers. They can do so much and often it's not relevant, but you have to do some major programming to sort that out.

So the mfgs just dump it all on the pilot and he is supposed to figure out what is important.

What they need is a kill button, shuts all that stuff up and you fly what the instruments are telling you.

“Did you hear the CEO say that the handling characteristics of the Max were supposed to be the same as those of an NG?”

Yeah…it’s pretty sad.

https://www.businessinsider.com/boeings-ceo-on-why-737-max-pilots-not-told-of-mcas-2019-4

I am puzzled by a test procedure (Florida) that, while testing the AOA as good, would result in a released AOA with a bad value at its zero position.

I tested a lot of wiper resistors (shafts), and I always checked that the 1/4 position was close to 1/4, 1/2 was half and 3/4 was 3/4 of the resistor value.

Those had no true calibration marks; that was done once installed, but I sure would have seen a significant error of more than 5 degrees.
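The multi-point check described above translates directly to a resolver, which reports its shaft angle as sine and cosine amplitudes that are decoded with atan2. Comparing the decoded angle against known reference positions catches a constant offset at every point, as long as the reference is absolute. A minimal sketch; the reference positions and the 1° tolerance are hypothetical.

```python
import math
from typing import List, Tuple


def resolver_angle_deg(sin_v: float, cos_v: float) -> float:
    """Recover the shaft angle from the resolver's sine and cosine amplitudes."""
    return math.degrees(math.atan2(sin_v, cos_v))


def check_points(reference_deg: List[float], measured_deg: List[float],
                 tol_deg: float) -> Tuple[bool, float]:
    """Compare measured angles with reference positions; return (passed, worst error)."""
    errors = [abs(m - r) for r, m in zip(reference_deg, measured_deg)]
    worst = max(errors)
    return worst <= tol_deg, worst


# Simulated resolver with a hypothetical +21 deg offset, checked at four positions.
refs = [-10.0, 0.0, 10.0, 20.0]
measured = [resolver_angle_deg(math.sin(math.radians(r + 21.0)),
                               math.cos(math.radians(r + 21.0))) for r in refs]
passed, worst = check_points(refs, measured, tol_deg=1.0)
```

Here `passed` is `False` and `worst` is about 21°. The check only works, though, if the reference angles are absolute; if the test set itself is reading relative to a shifted zero, the same offset cancels out.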

Not sure if this interpretation is correct, but it sounded like the internal mechanism was left in the calibration position (20 degree offset) after the calibration, instead of being restored to the operational position (zero offset). Again I could be wrong. This should have been detected by verifying signal output after installation.

1.16.2 Observation to the Xtra Aerospace (from the report, pp89-91)

“The angles recorded for Resolver 1 and Resolver 2 resulted in a 25-degree bias over the full range of vane travel. The test demonstrated that an AOA sensor calibrated and tested with a Peak API in relative mode could result in an equal bias introduced into both resolvers. The bias would not be detected during either AOA sensor calibration or CMM Revision 8 return-to-service testing.”

“These test results suggest that there was a possibility of differences or a bias if the REL/ABS toggle switch was inadvertently selected to REL position.”

The miscalibration could have been caused by the REL/ABS switch on the testing unit in Florida. The REL position can be used during calibration in null mode against a known reference position. We also know that the observed 20-degree bias corresponds to a known calibration position.

We don’t know exactly what happened in Florida, only that the miscalibration occurred there, and the FAA has revoked their repair certification.

However from the Lion Air report:

“After the installation, the AMM required an installation test that can be performed with recommended method which requires test equipment of AOA test fixture SPL1917 or alternative method using the SMYD BITE module. The test fixture was not available in Denpasar therefore the engineer performed the installation test by alternative method.

The alternative method was performed by deflecting the AOA vane to fully up, center, and fully down while observing the indication on the SMYD computer for each position. The AOA values indicated on the SMYD computer during the test were not recorded even though BAT procedures required it, however the engineer in Denpasar stated that the test result was satisfactory.”

Also:

“The engineer in Denpasar provided to the investigation some photos of the SMYD unit during an installation test as evidence of a satisfactory installation test result. The investigation confirmed that the SMYD photos were not of accident aircraft and considered that the photos were not valid evidence.”

Finally, we know that the 20 degree bias was present on the ground as the aircraft was activated, immediately after the sensor replacement.

Another paragraph from the report:

“Following the accident, NTSB and Boeing performed AOA sensor installation test on an aircraft with an AOA sensor that deliberately misaligned by 33° bias before install. The installation test was performed by alternative method referred to in the AMM. The test result indicated that a misaligned AOA sensor would not pass the installation test as the AOA values shown on the SMYD computer were out of tolerance and “AOA SENSR INVALID” message appeared in the SMYD BITE module. This test verified that the alternate method of the installation test could identify a 21° bias in the AOA sensor.”

“Comparing the results of the installation test in Denpasar and Boeing, the investigation could not determine that the AOA sensor installation test conducted in Denpasar were successful.”
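The alternative installation test in the quotes above (vane fully up, center, fully down, each reading compared with what the SMYD should show) can be sketched as below. The expected readings and the tolerance are hypothetical placeholders; the actual AMM values are not quoted in the report excerpt. The sketch only shows that a biased sensor fails at every position once the readings are actually compared.

```python
# Minimal sketch of a three-point installation check.
# EXPECTED_DEG and TOL_DEG are hypothetical placeholders, not AMM values.
EXPECTED_DEG = {"fully_up": 40.0, "center": 0.0, "fully_down": -40.0}
TOL_DEG = 3.0


def installation_test(measured_deg: dict[str, float]) -> list[str]:
    """Return the test positions whose reading is out of tolerance (empty list = pass)."""
    return [
        pos for pos, expected in EXPECTED_DEG.items()
        if abs(measured_deg[pos] - expected) > TOL_DEG
    ]


# A sensor with a 21-degree bias, as in the NTSB/Boeing test, fails at every position.
biased = {pos: value + 21.0 for pos, value in EXPECTED_DEG.items()}
print(installation_test(biased))  # ['fully_up', 'center', 'fully_down']
```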

No photos, no SMYD recording…

Confirmed mis-calibration in Florida, mis-installation in Denpasar, mis-diagnosis in Jakarta, MCAS misfire mid-air. That is a lot of mis-odds lining up to bring down a plane.

VdG, two AOA sensors in a row having faults, plus a possibly bad test during installation, does make one want to check the serial number of the aircraft to see if it ends in ’13’ (13 being an unlucky number), or if a curse was put on the plane. Literally, what are the chances?

Literally? Well, Lemme to the rescue:

“There have been 30 occurrences of AoA malfunction (erroneous high angle reading) over an 18 year period, across many Boeing models, without any significant incident, until the MAX MCAS malfunction was added.”

This translates to one AoA vane malfunction, with a probable subsequent MCAS misfire, every 7.2 months. The Lion Air and Ethiopian accidents were less than five months apart.
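The arithmetic behind the 7.2 months, plus one rough way to answer "what are the chances". The Poisson assumption (malfunctions arriving randomly at a constant rate) is mine, not from the source, and the rate pools many Boeing models over 18 years, so treat the result as an illustration only. The 5-month gap is the round figure used in the thread.

```python
import math

# Figures quoted in the comment: 30 AoA malfunctions over 18 years, fleet-wide.
events = 30
years = 18.0

mean_interval_months = years * 12 / events  # 216 / 30
print(round(mean_interval_months, 1))  # 7.2

# Assumption (not in the source): malfunctions arrive as a Poisson process at that rate.
gap_months = 5.0
p_at_least_one = 1 - math.exp(-gap_months / mean_interval_months)
print(round(p_at_least_one, 2))  # roughly 0.5
```

Under that assumption, seeing another malfunction within five months is about a coin flip, which is why a per-event rate alone says little about whether two accidents close together are "unlucky".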

Keep in mind, it was likely a bird strike on the Ethiopian MAX, and the pilots had some warning.

They still did not handle it correctly.

So, even with no chain longer than a bird strike, and with warning, it was still not an issue all pilots could deal with.

The Ethiopian captain looked to be fairly experienced; the co-pilot, who was not, clearly was of no help with cockpit management.

So while it took many steps with Lion Air, it took one step plus MCAS with Ethiopian.

Clearly there is a significant pilot component, as well as the lethality of MCAS 1.0.

“They still did not handle it correctly. ”

Yet they followed the Runaway Stabilizer checklist as mandated by the Nov AD:

“After the autopilot disengaged, the DFDR recorded an automatic aircraft nose down (AND) trim command four times without pilot’s input. As a result, three motions of the stabilizer trim were recorded. The FDR data also indicated that the crew utilized the electric manual trim to counter the automatic AND input.

The crew performed runaway stabilizer checklist and put the stab trim cutout switch to cutout position and confirmed that the manual trim operation was not working.” (p25 – preliminary report on Ethiopian accident)

I think the significant pilot component in this case was, again, lack of adequate information regarding a critical intervening system (MCAS). The November AD left these pilots in a dead end.

To be fair, in the second crash excessive airspeed became an important factor, and was the cause of manual trim being difficult or impossible to use.

In other forums and the congressional hearings, people have claimed that the electric trim became unresponsive as well, which would have meant recovery was impossible. We will have to wait for the final report to see.

From what we know now, it would seem that distraction and unfamiliarity played a role in the second crash as well.

I agree that is a fair observation, but one which has a very plausible explanation: the overspeed was a consequence of the aircraft not being in the intended climb pitch because of the many AND inputs MCAS was sending. At the same time, power was probably what the pilots were clinging to in order not to lose altitude, which would translate into potential additional time to sort out the situation they found themselves in.

If column input (elevator) is not sufficient, if electric trim (stabilizer) is not sufficient, and if manual trim (stabilizer) is not operational, I don’t think anyone would surrender their last control, power, as the last-resort counter to the behaviour of the aircraft in that situation. Flaps would indeed have helped, since extending them would disable MCAS, but in the absence of this critical piece of information, that would have seemed similar to reducing power, and therefore out of the question in their minds. This is obviously speculative, but I think it is sound logic for them to have followed.

You are attempting to interject logic into this.

While I understand why Ethiopian could cascade, the fact is also that the pilots did not follow any of the proper procedures all the way through.

They let speed get totally out of hand.

There is a limit to how fast you can be going and still be allowed to lower flaps (they can rip off, and you can also go asymmetric and damage the rear of the aircraft, including those critical tail feathers).

In an emergency, you can’t focus on the crisis and ignore flying the aircraft.

They never counter trimmed long enough to pull the stabs out and just stop it.

They gave up and put the stabs back in.

So, yes there was some really bad piloting by people who had information on the issue and still did not handle it.

For a pilot this is a tough one. Bjorn feels the pilots are not at fault, but I struggle with that.

They certainly are not at fault for the initial problem, but it was flyable and survivable.

Their actions took an emergency into a crash.

TW:

I stand by the logic I presented; in fact, it is similar to the logic Boeing itself states underlies the development of the NNCs:

“- The settings are biased toward a higher airspeed as it is better to be at a high energy state than a low energy state” (MAX’s FCTM)

On the other hand, it does also state:

“These memorized setting are to allow time to stabilize the airplane, remain within the flight envelope without overspeed or stall, and then continue with reference to the checklist.”

Given that, 50 seconds after MCAS first triggered, the PF decided on a target altitude of 14,000 feet instead of continuing the climb, he should have adjusted from climb thrust to level-flight thrust, unless he was still below it… which in fact he was: 13,400 ft, 140 seconds into his decision.

I maintain that, in the PF’s mind, overspeed was not the immediate issue, and in any case it would have been overcome by successfully resolving the problematic pitch. But of course, this is just plausible speculation, and the final report won’t clear that up for us. One either agrees with its plausibility or not; I take it you don’t, and that’s fine.

“They never counter trimmed long enough to pull the stabs out and just stop it.”

This also intrigues me. From the final report:

“The main electric and autopilot stabilizer trim have two rates: high trim rate with flaps extended and low trim rate with flaps retracted.”

I sampled the rates of manual electric trim (the change in trim position divided by the time in seconds), and they were consistent with flap position.

I guess the pilots on both flights were excessively cautious: they did not want to go beyond the 6.1 and 5.6 stabilizer positions set for the climb, likely because of the stall warning, which they could have ignored for that matter.

Yes, that’s the usual aviation accident signature. Many things go wrong in a chain that aligns to cause a disaster. It’s why we have to look at every link and try to break it for the future.

Bjorn:

I don’t get why speed and altitude would care about AOA, and disappear.

As far as I know they have no relationship.

Also of some note are the altitude and speed disagree alerts on the final flight.

Both were off, though not by so much as to be a crisis, other than for a Cat 3 landing.

They were flashing their alerts into the rest of the mess. Add in the AH being set 13 degrees wrong on the First Officer side.

I continue to fail to see the relevance of AOA to an airline pilot. Fighter pilots who live at the extreme edges, yes, but airline pilots?

@TW

Yes, they are ‘related’; even I, who am not an ATPL/CPL pilot, know that the AOA signal is part of the speed and altitude calculations.

Instead of me explaining, just search the net for ‘correlation between AOA and IAS’; you will find literature on the subject to keep you occupied the whole weekend. The correlation is indirectly mentioned in the FAA ADs published following the LA accident. The speed and altitude don’t disappear, they change value compared to what the other pilot reads.

Svein:

As if I don’t have enough to do – well I can speed read though I would have appreciated a link.

I only have a Commercial license (with instruments added as well). I don’t even know if fighters had AOA back then.

TW, a good basic Q&A on airspeed correction using AOA:

https://www.airliners.net/forum/viewtopic.php?t=738067

Svein:

I have done some reading and AOA in and of itself tells me nothing.

You need speed, aircraft weight (G force) and even turn angle as well as altitude to have AOA mean anything.

I am seeing it as data that feeds in with other data to indicate that you are approaching stall, and that stall has to be mapped to that aircraft.

If you don’t fly at high AOA I see it as just more data on the display that takes up space and potentially distracting.

Now feed it all into the PFD with the bars and limits to where you don’t want to go, ok, but you should not be up there in those areas anyway.

TW, if you’re a Naval Aviator, always landing on a postage stamp with varying weights, you’ll know what an AOA is.

You can stall at 100 mph, 200 mph, 300 mph, but, always at the same AOA.

=====

http://navyair.com/Angle%20of%20Attack%20Indicator.htm
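The "same AoA, different speed" point follows from the lift equation: at the stall the wing is at its critical AoA (maximum lift coefficient), and n·W = ½·ρ·V²·S·CLmax, so the stall speed scales with the square root of the load factor n. A minimal sketch, assuming constant weight, air density and configuration and ignoring Mach effects; the 100 mph 1 g stall speed is a hypothetical round number.

```python
import math


def load_factor_level_turn(bank_deg: float) -> float:
    """Load factor n = 1 / cos(bank) in a steady, level, coordinated turn."""
    return 1.0 / math.cos(math.radians(bank_deg))


def stall_speed(v_stall_1g: float, load_factor: float) -> float:
    """At the same critical AoA (same CLmax), n * W = 0.5 * rho * V^2 * S * CLmax,
    so the stall speed grows with the square root of the load factor."""
    return v_stall_1g * math.sqrt(load_factor)


# Hypothetical airplane with a 100 mph 1 g stall speed.
for n in (1.0, 4.0, 9.0):
    print(n, stall_speed(100.0, n))  # 100.0, 200.0 and 300.0 mph

# Bank angle needed for a 9 g level turn.
print(round(math.degrees(math.acos(1 / 9)), 1))  # 83.6
```

So 100, 200 and 300 mph stalls correspond to 1 g, 4 g and 9 g, all at the same AoA; AoA is the one variable that does not move with weight or load factor.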

RD:

I am a pilot, I have done all the stalls (and got myself into a spin)

That said, airliners are not landing on carriers.

You fly an aircraft normally (carrier pilots are just plain nuts) and you will have a feel for the normal envelopes of stall.

Note: I saw a film of the redo of the spin test series for the F-4 Phantom, as the service was having problems with recovery and they wanted to nail down the correct control positions and timing.

In one series they took it up to 110 degrees (yes, 20 degrees past vertical), stalled it and spun it. It took them 20,000 feet to recover. At 15,000 they were told to bail out. Now that is also insane. Bless them.

But commercial aircraft don’t have ejection seats for the pilots, let alone the passengers, and we aren’t talking about doing a 110-degree stall either.

And as it’s the load factor (G force) that allows you to stall at 300 mph (say, an 84-degree, 9 g turn), AOA means nothing unless it’s related to the rest.

The systems that detect airflow turbulence on your wing are better than AOA; that is relevant.

AOA is only relevant if it has other data to be of any real use and it needs to be displayed as a unified output, not a value unto itself.

And would you depend on a computer where, if the AOA is bad, the rest is as well?

What I am reading is not what Boeing has on the 737 (variations of this).

https://www.flyingmag.com/how-it-works-angle-attack-indicator/

Reserve lift indicator or AOA put through a computer with other data for stall.

Adding another item to the system is suspect. You could put it into the PFD with a bug that is the limit. Don’t go any higher than the V.

I continue to see a separate AOA display as a distraction from where your attention normally is, which is the PFD.

Fewer distractions not more.

@TW,

Try this AB (Airbus) reading:

https://www.skybrary.aero/bookshelf/books/2263.pdf

Search for IAS. It’s around page 24 – the whole book is worthwhile reading. It’s more than one air speed!

I have been working with control systems on large integrated oil platforms; there we can have avalanches of alarms (hundreds) when we have an upset. The good thing about these installations is that they have a clear and easy-to-understand fail-safe state: closed-in and depressurized/emptied. For airliner systems, fail-safe is harder to deal with.

What we have worked on is finding the root cause, the first-out sensor(s) that started the whole thing. With today’s fast-scanning computers it’s getting easier to ‘find’ it. Then, on the operator screens, we highlight the root cause and dim the consequential alarms.

The stick-shaker going (on one side) should indicate a problematic AOA (when the other pilot’s data is good, also in relation to the artificial horizon). Then it should be a memory item to ‘forget the speed and altitude on the shaking side’. The pilots’ displays should be able to do the above masking of consequential alarms.
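The first-out idea can be sketched as follows: sort alarms by the time they were raised, treat the earliest as the root cause, and dim later alarms that a cause-and-effect table lists as its consequences. The alarm names and the table are hypothetical illustrations, not actual 737 annunciations; real systems use engineered cause-and-effect tables, and pure time ordering can be fooled when alarms arrive nearly simultaneously.

```python
from dataclasses import dataclass


@dataclass
class Alarm:
    name: str
    time_s: float  # time the alarm was first raised


# Hypothetical consequence map: alarms known to follow from a root alarm.
# The names are illustrative, not actual 737 annunciations.
CONSEQUENCES = {
    "STICK_SHAKER_L": {"IAS_DISAGREE", "ALT_DISAGREE"},
}


def first_out(alarms: list[Alarm]) -> tuple[str, list[str], list[str]]:
    """Return (root alarm, alarms to highlight, alarms to dim).

    The earliest alarm is treated as the first-out (root cause); later alarms
    listed as its consequences are dimmed, anything else stays highlighted.
    """
    if not alarms:
        raise ValueError("no alarms to classify")
    ordered = sorted(alarms, key=lambda a: a.time_s)
    root = ordered[0].name
    known = CONSEQUENCES.get(root, set())
    dimmed = [a.name for a in ordered[1:] if a.name in known]
    highlighted = [a.name for a in ordered if a.name not in known]
    return root, highlighted, dimmed


alarms = [
    Alarm("IAS_DISAGREE", 3.0),
    Alarm("STICK_SHAKER_L", 0.5),
    Alarm("ALT_DISAGREE", 4.0),
    Alarm("OTHER_ALERT", 6.0),
]
print(first_out(alarms))
# ('STICK_SHAKER_L', ['STICK_SHAKER_L', 'OTHER_ALERT'], ['IAS_DISAGREE', 'ALT_DISAGREE'])
```

An unrelated alarm stays highlighted, so masking consequences does not hide a second, independent problem.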

@ TW,

Look up Indicated Airspeed on Wikipedia. There you will find what you need. In the meantime, I’ll take the dogs for a walk.

Svein:

I used to live, breathe and calculate that stuff.

How did I ever survive without AOA?

The debate about AoA display has raged for a while now. Manufacturers say that it’s unnecessary and might contribute to information overload.

But several pilots have pointed out examples where it would have provided additional situational awareness, that would have been valuable. Sullenberger has said this in response to the flight 447 and other accidents. It would seem to have had diagnostic value in both MAX accidents. In Lion Air, the flight might not have proceeded at all.

I’m not a pilot, but it seems logical that if it’s readily available and is continuously being used for aircraft control, such that a malfunction causes several alarms, then it should be visible. This would enhance situational awareness in critical situations, even if pilots would not be consulting it in more normal situations.

If you are so mentally hosed as to pull back a control column (AF447), thinking it’s going to do (what?) when you have no airspeed, then an AOA indicator is like another little light coming on.

Sure, if you feed it and other data into a PFD you can display limits that are useful, but another item to look at while an emergency is going on?

Walk into the cockpit of an A330 and you see 20-some degrees nose up on the PFD and your VSI at -10,000? Uh, guys, this puppy is stalled.

Sure having the stock market ticker is great to have so you can watch your investments go up and down, but does it do anything for flying the airplane?

As much as I respect Bjorn and Sullenberger, both have military backgrounds flying fighters.

The average pilot just needs to know where to point the nose (or where not to)

I would put myself in that average pilot group, not a Sully and not a Bjorn (I have often discussed with myself what I would have flown in the military, and attack aircraft, not fighters, seems to be my profile).

Call me a Skyraider or A-10 kind of guy.

TW,

Bjorn answered your question with the following statement from this very article.

In other words, AoA sensors aren’t really needed for the pilot to fly the aircraft, but are needed for accurate speed and altitude measurement.

@TW, here is another good link, this time on YouTube, where you will find all you need to be an excellent pilot, or learn everything there is to know about flying. Enjoy!

https://www.youtube.com/watch?v=jpPvKZ-RLjw

It’s not about the theory of airspeed and altitude vs AoA; it’s the measurement system, with pitot tubes and static ports, which has errors coming from how the air flows over the aircraft fuselage. These are measured during flight test, and a calibration table is created which has AoA as one of its lookup parameters. If AoA is missing, the speed and altitude are flagged as non-serviceable, as the correction is not done.
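A minimal sketch of the table lookup described above, shown for altitude only (an airspeed correction would work the same way). The table values are hypothetical; real static-source-error tables come from flight test and also depend on Mach and configuration. The sketch shows both effects discussed in this thread: with no valid AoA the output is flagged, and a biased AoA silently picks the wrong correction.

```python
from bisect import bisect_right
from typing import Optional

# Hypothetical static-source-error correction table (illustration only).
# Real tables come from flight test and also depend on Mach and configuration.
AOA_BREAKPOINTS_DEG = [-5.0, 0.0, 5.0, 10.0, 15.0]
ALT_CORRECTION_FT = [40.0, 20.0, 0.0, -30.0, -70.0]


def interpolate(x: float, xs: list[float], ys: list[float]) -> float:
    """Piecewise-linear table lookup, clamped to the end values outside the table."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    i = bisect_right(xs, x) - 1
    frac = (x - xs[i]) / (xs[i + 1] - xs[i])
    return ys[i] + frac * (ys[i + 1] - ys[i])


def corrected_altitude(raw_alt_ft: float, aoa_deg: Optional[float]) -> tuple[float, bool]:
    """Return (altitude, valid). Without a valid AoA the correction cannot be
    applied, so the raw value is passed through but flagged as not valid."""
    if aoa_deg is None:
        return raw_alt_ft, False
    return raw_alt_ft + interpolate(aoa_deg, AOA_BREAKPOINTS_DEG, ALT_CORRECTION_FT), True


print(corrected_altitude(5000.0, None))   # (5000.0, False)
print(corrected_altitude(5000.0, 5.0))    # (5000.0, True)
print(corrected_altitude(5000.0, 25.0))   # (4930.0, True): same raw data, biased AoA
```

Clamping at the table ends is a design choice of this sketch; a real system might instead flag an out-of-range AoA.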

@ Bjoern,

Air speed and altitude, as I once learned, were derived from static and dynamic pressure sensors outside; the outside sensors were directly connected to the instruments in the cockpit via tubing. I guess small aircraft use the same today. Then at one time in history (the 1980s) things became more sophisticated, and AOA was introduced into the calculations via ‘boxes’ like the air data computer (ADU). This enabled, among other things, better low-speed handling (approach). Then came the Configuration Module, which, in short, customizes the ADU for the aircraft type it is used on.

Hence, there is a coupling between IAS and AOA, in the sense that IAS depends on AOA; not much, but, as it looks from the accident report, enough to trigger disagree alerts for speed and altitude, and it would have for AOA too if that function had been implemented. Five knots difference for five seconds should be enough to trigger the alert, if my memory is correct.

But my main, and only, question is: when the WOW switch defines the aircraft as flying at rotation, the erroneous left AOA ‘started to work’. It saw the aircraft about to stall, and the speed and altitude readings on the two sides were sufficiently far apart to trigger alerts. At this point the flaps were out, and MCAS was still not active. And on the FO side, everything looked normal!

So, shouldn’t today’s airliner pilots know so much about the above that they immediately connect the alerts to a possible AOA failure, and see the alarms as ONE failure setting off a couple of others? Or is that too much to ask?
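A disagree alert with persistence, as recalled in the comment above ("five knots difference for five seconds"), can be sketched like this. The threshold and persistence time are the commenter's recollection, not confirmed 737 values, so they are parameters here; the function name and sample data are hypothetical.

```python
from typing import Optional


def disagree_alert_time(samples, threshold_kt: float = 5.0,
                        persist_s: float = 5.0) -> Optional[float]:
    """Return the time an IAS-disagree-style alert would trigger, or None.

    samples: iterable of (time_s, captain_ias_kt, fo_ias_kt), in time order.
    The split must exceed threshold_kt continuously for persist_s seconds.
    """
    start = None
    for t, capt, fo in samples:
        if abs(capt - fo) > threshold_kt:
            if start is None:
                start = t  # split just appeared: start the persistence timer
            if t - start >= persist_s:
                return t
        else:
            start = None  # split cleared: reset the timer
    return None


steady = [(t, 160.0, 152.0) for t in range(10)]  # 8 kt split held
transient = [(t, 160.0, 152.0 if t < 3 else 159.0) for t in range(10)]
print(disagree_alert_time(steady))     # 5
print(disagree_alert_time(transient))  # None
```

The persistence timer is what keeps short gusts or transients from raising nuisance alerts, at the cost of a few seconds' delay on a real fault.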

I had wondered if maybe this was why the captain gave control to the first officer, since his instruments were normal? Also if maybe seeing normal values contributed to the first officer not recognizing the need to trim out/counteract MCAS, as the captain had done?

There is no conversation between the captain and first officer at this time, so we don’t know what their reasoning was, or their awareness.

Bjorn:

Can you spell that out?

Non-serviceable, as in you need to shift control to the other pilot, or the values are not spot on?

I get the issue with all of it, but at what point is it non-serviceable, and wouldn’t they still have the AH as a reference as well?

Svein:

We are getting into average pilots vs. really above-average pilots.

To find out, we would need to test the range of pilots from Ethiopian, Lion, Air Asia and all the American airlines.

We know that in AF447, three Western pilots with a significant number of flight hours did not recognize a stall (with all the classic pointers), let alone why the FO put the nose up when the speeds dropped out in the first place (dumb as that would be at altitude; at least nose down is your training on loss of speed, aka stall).

At issue is that they have never really drilled down into why people (including pilots) react the way they do in truly out-of-the-ordinary situations (and speeds going away is not an emergency).

Some people put their foot on the accelerator of a car thinking it’s the brake.

Then it never occurs to them to take it off, as something has flipped and it’s the brake, despite the fact the car is going faster and faster.

With today’s cars they can tell you it was the accelerator they had pressed and never let go of, but in prior times the driver would swear up and down they were on the brakes.

What it proves is that, short of some cars with 350+ hp engines, you can’t overcome the brakes, and you sure can’t accelerate like they did.

The NTSB, in its report on this, has flat out told them they don’t have a clue and need to go back to the drawing board, quit assuming, and do scientific testing on people to find out what works and what does not.

Horns and alarms do not work, and they have known that forever, but over my career they have kept adding more and more variations of the same thing as backup alarms.

This at least has gotten some long-needed attention.

Frankly I feel a test is needed that throws something totally out of the blue. Some will panic and some will deal with it.

Those that panic probably can never be trained to reliably not panic when the unexpected is thrown at them.

We might not have enough pilots, but that may be the reality.

SveinSAN, the pilots may notice the difference of one side issuing alerts and the other side not, so which do you trust? Is the side not issuing alerts good or bad? You then have to try and reason it out but, in the meantime, fly the aircraft as best you can (i.e. look out at the big pitch indicator in front of you, the window, if there is good visibility) and go to known engine settings and visual references. You’re supposed to trust your instruments, but many a pilot has feathered the wrong engine during a failure on takeoff, making the situation worse. If you’re in the clouds and getting multiple warnings, you could get into an AF447 event, where you’re not sure which number to trust. It’s also difficult to mentally block out certain instruments that you’re used to relying on, even if you do know they are bad. Flying partial panel, it’s a good idea to actually physically block them out with tape, if you have time.

@RD

Richard, I would assume that the side with the stick-shaker going was at fault, and use the other side (no stick-shaker going) as correct. (I know the correlation between the AOA reading and the speed/altitude indications, so it’s a bit self-explanatory, but I guess that I would have concluded the same if I didn’t know this.)

For the flights that had the AOA with the intermittent fault (with no stick-shaker going), I would have concluded likewise. However, there the alerts were not critical and gave ample time to look up the manuals; the captain’s readings switched between showing the same as the FO’s and being different. I would trust the stable one.

Perhaps comments from an arm-chair referee (with many years in control engineering)

Guys: There is a backup display for airspeed, altitude, as well as an Artificial Horizon.

However, the co-pilot had his PFD at 13 degrees mis set at take off.

Add any nose-up angle to that and you are easily seeing stall angles (all the rest aside, once you get up into the 20+ degree area you are getting close to stalling, or are stalled).

There was a shift of control TO the co-pilot, then he gave it back or it was taken back (I forget which).

Again pilot issue and total lack of CRM.

TW:

“However, the co-pilot had his PFD at 13 degrees mis set at take off.”

From the MAX’s FCTM, cited in the final report:

“For optimum takeoff and initial climb performance, initiate a smooth continuous rotation at VR toward 15° of pitch attitude.”

“After liftoff, use the attitude indicator, or indications on the PFD or HUD (HUD equipped airplanes), as the primary pitch reference(…)

Note: The flight director pitch command is not used for rotation”

The 13 is the FO PFD value before rotation. Also, the -1 value on the Captain’s PFD is supposed to be the one driving the Flight Director by this time. At 80 knots through Vr, this is all before any functioning (or malfunctioning) AoA vane signal is deemed reliable.

Consequently, I don’t think your remaining observations apply.

“Liftoff attitude is achieved in approximately 3 to 4 seconds depending on airplane weight and thrust setting.” (FCTM)

Meanwhile, the stall warning triggers the stick shaker, and 4 seconds after rotation a 7º pitch is recorded, halfway to the procedural 15º.

This also shows how, in terms of magnitude, a 20-degree difference in AoA is more misleading for altitude than for speed in this phase of flight, i.e. a 10-20* knot difference versus a 230-foot difference 40 seconds after rotation.

*gross estimate

Re all the discussion about AOA on commercial aircraft:

From a Boeing document on the issue:

Operational use of Angle of Attack on commercial jet airplanes

(several years ago)

Angle of attack (AOA) is an aerodynamic parameter that is key to understanding the limits of airplane performance. Recent accidents and incidents have resulted in new flight crew training programs, which in turn have raised interest in AOA in commercial aviation. Awareness of AOA is vitally important as the airplane nears stall. It is less useful to the flight crew in the normal operational range.

On most Boeing models currently in production, AOA information is presented in several ways: stick shaker, airspeed tape, and pitch limit indicator. Boeing has also developed a dedicated AOA indicator integral to the flight crew’s primary flight displays

last page

AOA is a long-standing subject that is broadly known but one for which the details are not broadly understood. While AOA is a very useful and important parameter in some instances, it is not useful and is potentially misleading in others.

The relationship between AOA and airplane lift and performance is complex, depending on many factors, such as airplane configuration, Mach number, thrust, and CG.

AOA information is most important when approaching stall.

AOA is not accurate enough to be used to optimize cruise performance. Mach number is the critical parameter.

AOA information currently is displayed on Boeing flight decks. The information is used to drive the PLI and speed tape displays.

An independent AOA indicator is being offered as an option for the 737, 767-400, and 777 airplanes. The AOA indicator can be used to assist with unreliable airspeed indications as a result of blocked pitot or static ports and may provide additional situation and configuration awareness to the flight crew.

====

http://www.ata-divisions.org/S_TD/pdf/other/IntroducingtheB-787.pdf

Tom Dott, ISASI, Sept 2011, “Introducing the 787”

http://www.boeing.com/commercial/aeromagazine/aero_12/aoa.pdf

Svein’s link says AOA is particularly helpful at low airspeeds.

My take is that (and all apologies to Bjorn) AOA was brought into the mix as a result of military pilots dominating the commercial sector for a long time.

My take is it’s a comfort thing. Military pilots are used to it, but commercial operations have no use for it, and it’s another source of confusion.

Note the above says MAY.

I would rank myself in the average non-military pilot group that sees no use for it.

As noted, it’s a coarse device; clearly, in the case of the two crashes, it was tied in such that all it did was cause people to die.

I would like to see one example of it having saved lives.

In the ’70s and ’80s I worked at IBM as a service tech on test equipment at a mainframe manufacturing plant. NDF (no defect found) and TWA (trouble went away) were common comments frequently found in log books.

No lives were at stake with our diagnostics and repairs, there would just be another service call.

I like Boeing, but they screwed up.

IBM could take a person off the street and train them to service the mainframes used in most Fortune 500 companies (not the job that I had, although they took us off the street too).

But if something went wrong, they worked to find out WHERE THEY WENT WRONG IN THEIR TRAINING.

They didn’t lay the blame on the technician (I could be wrong here).

Aviation is much different from the IBM environment that I remember. Boeing does not control the technical environment (the airline customers) as IBM did its technicians.

But barring gross negligence, a mistake by an aviation tech should not cost lives.

The nature of commercial aviation is that there is rarely a single factor of causation in accidents, because the emphasis on safety in the regulatory environment is so strong. Aircraft are not usually brought down by a single failure. That in turn is because the consequences of an aircraft accident are so highly likely to involve loss of life.

This means regulations are designed to break the causation chain at every potential link, to the extent that is possible. So every component is certified, the aircraft, the airline, the pilots, the maintenance staff, the repair shop. And if anyone makes a mistake that contributes to a crash, that will be investigated in hopes of preventing a similar occurrence in the future.

So that explains why technicians are held accountable, as are pilots, and manufacturer, and airline. No one is really exempt from scrutiny. But it should also be that we look at the whole chain, as well as individual links, and not try to single out any one element, either for blame or absolution. It’s really about fixing the complete chain.

Most of the controversy and debate surrounding these crashes revolves around people identifying an element they find particularly appalling, and focusing on that. But it’s the complete chain that matters most.

A simple way to put that is multiple layers of safety.

My boilers all had an over-temp safety (OTS); trigger it and you had to manually reset it to get back online. They were also tested at the boiler operating temp: you turned the OTS down until it tripped and cross-checked that it was reasonably close to the displayed temp. If it was more than 5 deg out (these are not precision devices), then you replaced the OTS, the temp display or the operating thermostat.

Much like on an aircraft, you could use two out of three as a good indicator of which one was bad.
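The two-out-of-three cross-check can be sketched as follows: a sensor is singled out only if the other two agree with each other and it disagrees with both. The sensor names and readings are hypothetical; the 5-degree tolerance is the one from the comment above.

```python
from typing import Optional


def odd_one_out(readings: dict[str, float], tol: float) -> Optional[str]:
    """Two-out-of-three check: return the sensor that disagrees with two
    agreeing sensors, or None if no single sensor can be singled out."""
    if len(readings) != 3:
        raise ValueError("expects exactly three readings")
    for suspect, value in readings.items():
        a, b = (v for name, v in readings.items() if name != suspect)
        if abs(a - b) <= tol and abs(value - a) > tol and abs(value - b) > tol:
            return suspect
    return None


# Hypothetical boiler readings in degrees, with the 5-degree tolerance from the comment.
readings = {"over_temp_trip": 182.0, "display": 180.0, "operating_thermostat": 195.0}
print(odd_one_out(readings, tol=5.0))  # operating_thermostat
```

Voting only works when failures are independent: if two sensors are wrong in the same way, the vote singles out the good one.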

Macondo failed because they hosed up every safety system on the well (something like 7). Some were stupid (ignoring what the instruments were telling them rather than believing the instruments). Others were insane (not even testing the blowout preventer after repair).

To add insult to injury, the gauge they ignored did not have a backup.

Me? Swap gauges. Them? Oh, the gauge has to be wrong.

Other stupidity: they never tested the blowout preventer at the depths they were using it at, nor did they check whether the batteries were good in both sides of it (two sets).

Once you are past the last layer of safety, things go boom or crash and sadly people die.

Confirmation bias plays a major role. You believe testing isn’t needed so you don’t test, but by not testing, you don’t learn whether testing is needed or not.

It’s a subjective rather than objective approach. Objectively, you would confirm your initial view by testing, so you know you are on firm ground.

I suspect this was a factor for MCAS development as well. Boeing viewed it as a helper system, that would automatically assist the pilot if the aircraft got into a high pitch state, but was otherwise benign, and unlikely to ever be needed or activated in practice.

This view then was used to justify decisions that consistently supported it:

1. Lower impact classification that did not require redundancy or full fault tree development (reduced testing and analysis).

2. No need to provide information to airlines/pilots because MCAS behavior was incorporated into simulator (later found that MCAS was not correctly represented in simulator, and also simulator time was not mandatory, so argument only applied to new pilots).

3. If unforeseen MCAS malfunction occurred, behavior would be similar enough to runaway trim that pilots would recognize and use memory items (found that pilots did not respond as expected, although in fairness pilot training & experience are also factors).

4. Expansion of authority from high-speed to low-speed case did not warrant a thorough review (again reduced testing and analysis).

Boeing did not really deviate from this view until after the second crash. Then the testing and analysis finally took place, which removed the confirmation bias.

Rob,

Good comments regarding confirmation bias. I think this is how Boeing convinced themselves that what they were implementing was good enough.

We’ve all heard and discussed Boeing’s outdated assumption that a pilot could respond to an MCAS malfunction in 3 seconds. A subtler part of that assumption was that Boeing thought the pilot would always use manual trim to counter any MCAS action, apparently even if MCAS acted when it was supposed to. This led Boeing to convince itself that repetitive MCAS actions were not as hazardous as they really were.

Thanks Mike. Confirmation bias plays a role in most major disasters. In the space shuttle Columbia disaster, NASA continued to believe shedding foam insulation could not pierce the wing, until the cannon test blew a huge hole in it. Then there was utter shock that such a basic assumption, which had been in place for 20 years, was false.

Svein:

I worked with building automation systems (as well as sort conveyor systems, though I was only the victim of the sort programming; I did not direct any of it).

The outside controls contractors who came in for updates would remark that my system was too clean: no alarms.

I was very selective about what counted as a key item. Sometimes the alarms were more an alert to an event I wanted to know had occurred, even if it was no longer current (data logged).

My systems included a simulator building, where you could get a whole screen of alarms when the source was a single issue. (That one was dicey, as those single issues were also critical: any one of them would stop sim ops.)

No life safety was involved (phew), but the operation had one item that was paramount, outranked only by an injured employee (and frankly, injured employee or not, as soon as they were clear of the conveyor the operation started again).

Sort Delay: Thou Shalt NOT have a sort delay. That was priority 1. The simulator was 1A. If someone was handling 1, then I handled 1A.

We wound up with a contractor on a new boiler system who wired his controls in parallel with the OEM controls. Normally you would set the OEM controls to maximum heat and the CC would reset below that.

At issue was a bad design where the CC sensor was in a combined header fed by both boilers, rather than one sensor per boiler.

When one boiler failed (frequently enough, with a flame-failure sensing issue), it would drive the other boiler into high-temperature shutdown.

One of the other Thou Shalts (right at the top) was: you don’t use a safety as an operating control, which is in effect what was being done.
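The combined-header problem can be sketched as a toy simulation. Everything in it is a hypothetical illustration rather than the actual installation: the temperatures, the crude stepwise controller, and the names `simulate`, `RETURN_TEMP`, `SETPOINT`, `HIGH_LIMIT` and `MAX_RISE` are assumptions. It shows how a single sensor on a shared header can push the surviving boiler into its high-limit cutout once the other boiler drops out.

```python
# Toy model of two boilers feeding one combined header with a single control sensor.
# All numbers are hypothetical, chosen only to illustrate the failure mode.
RETURN_TEMP = 140.0  # degF, water entering each boiler (and leaving a dead one)
SETPOINT = 180.0     # degF, target temperature at the combined-header sensor
HIGH_LIMIT = 210.0   # degF, per-boiler high-temperature safety cutout
MAX_RISE = 90.0      # degF, temperature rise across a boiler at full fire


def simulate(failed_boiler=None, steps=100, step_size=0.02):
    """Run a crude stepwise controller on the header sensor.

    Returns (header_temp, outlet_temps, tripped) after the last step.
    """
    firing = 0.0                 # common firing rate, 0.0 .. 1.0
    running = [True, True]
    tripped = [False, False]     # cutout modeled as latching (manual reset)
    if failed_boiler is not None:
        running[failed_boiler] = False  # e.g. a flame-failure lockout

    header, outlets = RETURN_TEMP, [RETURN_TEMP, RETURN_TEMP]
    for _ in range(steps):
        outlets = []
        for i in range(2):
            temp = RETURN_TEMP
            if running[i] and not tripped[i]:
                temp = RETURN_TEMP + firing * MAX_RISE
                if temp >= HIGH_LIMIT:
                    tripped[i] = True   # the safety ends up acting as the control
                    temp = RETURN_TEMP
            outlets.append(temp)
        # Equal flow through both boilers, so the header sees the average.
        header = sum(outlets) / len(outlets)
        if header < SETPOINT:
            firing = min(1.0, firing + step_size)
        elif header > SETPOINT:
            firing = max(0.0, firing - step_size)
    return header, outlets, tripped


print(simulate())                  # both boilers healthy: no trip
print(simulate(failed_boiler=0))   # boiler 0 down: boiler 1 hits its high limit
```

With both boilers running, the loop hunts around the 180 degF setpoint with both outlets near 180 degF. With one boiler down, the header can only reach the setpoint if the live boiler's outlet reaches 220 degF, above its 210 degF limit, so the controller keeps firing harder until the cutout trips. Giving each boiler its own sensor removes that coupling in the model.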

People may think building controls are not a safety issue. I have seen pictures of boilers that blew up. One was a 50-gallon hot water heater that blew out an entire corner classroom in Oklahoma. Killed 20 or 3 kids.

So yes, working with hot water boilers is a lesson in controls and safety unto itself, and that does not even include carbon monoxide clearing systems.

Between the controls work, my pilot's license, and working around that equipment, I got an interesting and pretty broad education.

Our last line of defense was routinely being used.

May I ask for comment on how a 3-month-old aircraft would need to have a part such as an AoA vane replaced? If this is normal, then I am shocked. The theory is a bird strike that damaged or sheared it off. Given the criticality of the sensor and the number of bird strikes, surely a more durable design is called for? As an engineer, I'd note that even a simple raked front angle would at least deflect a bird (just one example of proactive design).

In the first crash, the sensor was mis-calibrated; it had a 20-degree error. In the second crash, a bird strike is suspected to have broken the sensor.

Normally the loss of one sensor is not a major issue, as there are 2 or 3 sensors for redundancy. In the case of MCAS, it was not programmed to make use of the redundant sensors, so the loss of one was enough to activate it.

Deflection may not work well because of the high airspeeds. The collision impact would have high force and impulse, even if the bird’s mass is low. Also you want to have the sensor in the free air stream, with nothing in front of it.
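To make the single-sensor point concrete, here is a minimal sketch contrasting a one-vane trigger with a two-vane cross-check. Boeing's public description of the updated MCAS says it compares both AoA inputs and does not activate if they disagree by 5.5 degrees or more with flaps retracted; that figure is borrowed here, but the activation threshold and the function names are hypothetical, and this is not Boeing's flight control code.

```python
# Illustrative comparison of single-sensor and cross-checked trigger logic.
# ACTIVATION_AOA_DEG is a made-up number; DISAGREE_LIMIT_DEG follows Boeing's
# published description of the MCAS update. Not actual flight software.
ACTIVATION_AOA_DEG = 14.0
DISAGREE_LIMIT_DEG = 5.5


def single_sensor_command(aoa_deg: float) -> bool:
    """One vane decides: a stuck or offset vane is trusted blindly."""
    return aoa_deg > ACTIVATION_AOA_DEG


def cross_checked_command(left_deg: float, right_deg: float) -> bool:
    """Two vanes must agree before the function is allowed to act."""
    if abs(left_deg - right_deg) >= DISAGREE_LIMIT_DEG:
        return False  # inhibit on disagreement instead of guessing which vane is right
    return (left_deg + right_deg) / 2.0 > ACTIVATION_AOA_DEG


# A vane reading about 20 degrees high while the true AoA is about 2 degrees:
print(single_sensor_command(22.0))        # True: acts on bad data
print(cross_checked_command(22.0, 2.0))   # False: disagreement inhibits it
```

With only two sensors, the logic can detect a disagreement but cannot tell which vane is wrong, so the safe response is to inhibit; identifying the bad sensor needs a third source.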

As I recall from reading part of the inspection and test report on the AOA, there was at least one case where, despite good checks, a major temperature change to below freezing would corrupt the output; of course, when checked at room temperature there was no problem. **Apparently** the temperature effect was caused by “gluing” a coil wire between two parts with different thermal expansion coefficients, so that with a change in temperature they would move in different directions, eventually breaking the coil wire or similar.
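The mechanism described above comes down to differential thermal expansion: two bonded parts of length L with expansion coefficients a1 and a2 move relative to each other by L * |a1 - a2| * dT. A quick sketch with hypothetical materials and dimensions (aluminium next to steel, a 10 mm joint, a 75 K drop from a room-temperature check to -55 °C); the report, as recalled, did not give these values, and `differential_movement_um` is an illustrative name.

```python
def differential_movement_um(length_mm: float, alpha1_per_k: float,
                             alpha2_per_k: float, delta_t_k: float) -> float:
    """Relative movement of two bonded parts, L * |a1 - a2| * dT, in micrometres."""
    return length_mm * abs(alpha1_per_k - alpha2_per_k) * delta_t_k * 1000.0


# Hypothetical joint: ~23e-6/K (aluminium) against ~12e-6/K (steel), 10 mm long,
# cooled by 75 K from a room-temperature bench check to a cold-soak condition.
movement = differential_movement_um(10.0, 23e-6, 12e-6, 75.0)
print(f"{movement:.2f} um per cold cycle")
```

A few micrometres looks harmless, but a fine wire bridging the joint is strained on every cold cycle, which is a classic route to fatigue. It also shows why a room-temperature bench check could pass: at the check temperature there is no mismatch at all.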

Whether that was a problem discovered in one of the vendor- or airline-provided sensors was not clear, or I missed it.

The AOA that was on the Lion Air aircraft in the flights prior to the crash had an intermittent fault. It was replaced by a mis-calibrated replacement sensor.

It will come as a shock: it goes by fancy terms like resolver (or some such), but what you have is a metal contact that slides along bare wires with much higher than normal resistance.

https://www.electronics-tutorials.ws/resistor/potentiometer.html

While you can seal some of those types, if you have a shaft type with a vane hanging off the end, plus multiple units on the shaft, there is no way to build it other than open.

So yes, regardless of the quality of the product, you are stuck with failures in them. They have a moving part and there is no way to make it truly no failure. You hedge it by sealing the AOA shaft and having those sensitive parts inside the hull.

From the description, I don’t believe it’s inside the pressure hull, so it is subject to -60 to 150 degree temperature swings.

It’s about as crude a system as exists in the world.

As an aside, some of the accumulator-type AOA units use precision nozzles with air sensing over them to pick up AOA. Basically they are looking at disturbed air, not an angle: disturbed air is what causes a stall, not the angle, which has to be interpreted against airspeed, altitude, and turn rate (or a sudden pull-up).

The speed sensors are tubes that push against an element in a line. Also about as archaic as it gets. The entire pitot system is sensitive to overpressure (someone putting an air nozzle into it will blow it out), to freezing over, and to bugs and crud getting into it.
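One pitot failure mode can be sketched numerically. Assume the pitot inlet and its drain are both blocked so the total pressure is trapped, while the static port stays open; indicated airspeed then behaves like an altimeter, rising in a climb and falling in a descent. The sketch uses the incompressible approximation V = sqrt(2 (pt - ps) / rho0) and the ISA troposphere pressure formula, not the compressible calibration a real airspeed indicator uses, and the helper names are hypothetical.

```python
import math

RHO0 = 1.225                 # ISA sea-level air density, kg/m^3
P0 = 101325.0                # ISA sea-level pressure, Pa
KT_TO_MS = 1852.0 / 3600.0   # knots -> m/s


def isa_static_pressure(alt_m: float) -> float:
    """ISA troposphere static pressure in Pa (valid below about 11 km)."""
    return P0 * (1.0 - 2.25577e-5 * alt_m) ** 5.25588


def total_pressure(alt_m: float, ias_kt: float) -> float:
    """Pitot (total) pressure for a given altitude and indicated airspeed."""
    v = ias_kt * KT_TO_MS
    return isa_static_pressure(alt_m) + 0.5 * RHO0 * v ** 2


def indicated_airspeed_kt(total_pa: float, static_pa: float) -> float:
    """Incompressible airspeed from pitot minus static pressure."""
    q = max(total_pa - static_pa, 0.0)  # the gauge cannot read below zero
    return math.sqrt(2.0 * q / RHO0) / KT_TO_MS


# Pitot blocks at 2000 m while indicating 250 kt; the trapped pressure stays fixed.
trapped = total_pressure(2000.0, 250.0)
for alt in (1000.0, 2000.0, 3000.0, 4000.0):
    ias = indicated_airspeed_kt(trapped, isa_static_pressure(alt))
    print(f"{alt:6.0f} m: indicates {ias:5.1f} kt")
```

In a climb the indication rises even if the aircraft is actually slowing, which is how an overspeed warning and a stall warning can end up sounding together.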

Aviation has come a long way in some regards, but in others it’s back in the '30s (or earlier).

Hmmm, the Stearman used a pivoted plate and pointer against a spring, fastened on a wing strut, for airspeed; maybe it was also an AOA indicator. So the major advancement was to enclose it and provide some wires to display it on a TV tube??

Orville used wing warping, but we are not there yet.

The resolver does NOT use a potentiometer – it is a contactless sensor and they seem to be regarded as quite reliable.

https://en.wikipedia.org/wiki/Resolver_(electrical)
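A resolver's output is easy to sketch: with the rotor excited by an AC carrier, the two stator windings return amplitudes proportional to sin(theta) and cos(theta), and the angle is recovered with atan2. The sketch assumes ideal, already demodulated amplitudes and uses hypothetical helper names. It also shows why a misaligned vane cannot be caught by the resolver itself: the electronics faithfully report whatever angle the shaft is at.

```python
import math


def resolver_outputs(shaft_angle_deg: float, gain: float = 1.0):
    """Ideal demodulated resolver signals: gain*sin(theta), gain*cos(theta)."""
    theta = math.radians(shaft_angle_deg)
    return gain * math.sin(theta), gain * math.cos(theta)


def decode_angle_deg(sin_amp: float, cos_amp: float) -> float:
    """atan2 recovers the full angle; a common gain change cancels out."""
    return math.degrees(math.atan2(sin_amp, cos_amp))


def sensed_aoa_deg(true_aoa_deg: float, vane_offset_deg: float = 0.0,
                   gain: float = 1.0) -> float:
    """A vane installed with an offset turns the shaft by that extra angle."""
    return decode_angle_deg(*resolver_outputs(true_aoa_deg + vane_offset_deg, gain))


print(sensed_aoa_deg(12.5, gain=0.8))             # excitation drift: still about 12.5
print(sensed_aoa_deg(2.0, vane_offset_deg=20.0))  # misaligned vane: about 22
```

The ratio-based decoding is a large part of why resolvers are robust to amplitude drift, but it offers no protection against a mechanically offset vane.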

Has anyone considered ramp rash due to the change in nose height of almost a foot?

And the passenger loading platform on the left side?

All data says the AOA was never calibrated.

If there were data showing anyone had, then yes, a ramp hit would be a possibility.

As it was never proven correct, I'd put the odds at 99.999999999% that it was lack of calibration.

Layman:

As to the AOA’s resistance to damage: per the regs, they ignore real-world damage and assess only internal failures (of the resolver). Yes, it’s insane.

And no, you can’t design an AOA that will survive a bird strike.

Do the math on the force from a 3 lb bird at 300 knots. It’s really, really high. Foam that weighed almost nothing wiped out a space shuttle.
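Taking up the "do the math" invitation, here is a back-of-the-envelope sketch using a simple soft-body assumption: the bird is brought to rest over the time it takes to travel its own length, so the average force is F = m * v^2 / L. The 0.2 m bird length is a hypothetical value, `bird_impact` is an illustrative name, and the result is an order-of-magnitude estimate, not a certification load.

```python
LB_TO_KG = 0.45359237
KT_TO_MS = 1852.0 / 3600.0


def bird_impact(mass_lb: float, speed_kt: float, bird_length_m: float):
    """Return (kinetic energy in J, average impact force in N).

    Soft-body estimate: impact lasts t = L / v, so F_avg = m * v / t = m * v**2 / L.
    """
    m = mass_lb * LB_TO_KG
    v = speed_kt * KT_TO_MS
    energy = 0.5 * m * v ** 2
    force = m * v ** 2 / bird_length_m
    return energy, force


energy_j, force_n = bird_impact(3.0, 300.0, 0.2)  # 0.2 m is an assumed bird length
print(f"kinetic energy ~ {energy_j / 1000:.1f} kJ")
print(f"average force ~ {force_n / 1000:.0f} kN")
```

That works out to roughly 16 kJ and an average force on the order of 160 kN (about the weight of 16 tonnes), applied over roughly a millisecond, far beyond what a small external vane can reasonably be sized for.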

That is why you have two; they are not vital to flight (or were not, until MCAS 1.0 reared its ugly head).

FBW, as it needs to vote, uses three.
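Voting with three sources is commonly done by median selection: the middle value is used, so a single wild sensor can never become the output, and a sensor far from the median can be flagged. A minimal sketch, not any particular aircraft's FBW logic; the 3-degree tolerance and the function names are hypothetical.

```python
import statistics


def voted_aoa(a: float, b: float, c: float) -> float:
    """Median select: one failed sensor is outvoted by the other two."""
    return statistics.median([a, b, c])


def outliers(values, tolerance_deg: float = 3.0):
    """Indices of sensors that differ from the median by more than the tolerance."""
    med = statistics.median(values)
    return [i for i, v in enumerate(values) if abs(v - med) > tolerance_deg]


readings = [2.1, 22.0, 1.9]   # sensor 1 reads about 20 degrees high
print(voted_aoa(*readings))   # 2.1
print(outliers(readings))     # [1]
```

Unlike the two-sensor case, three sources let the system both keep a usable value and identify which sensor has failed.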

You actually have a few other options but they have not been pursued as primary equipment (787 has a backup speed system based on inertial I believe)

I would guess you could use a ring laser gyro for some pretty accurate stuff, and you could feed the AH (artificial horizon) into the system.

How good the commercial ones are, I do not know. We set ours on the ground and they were good for the flight.

The gyro compass was not, and we cross-checked it against the real compass when stable. I would have to dig into my stuff to see what the difference was.

The various instruments I flew had backup power sources. Most were electrical, but we had vacuum as well in case electrical power went away.

“You actually have a few other options but they have not been pursued as primary equipment (787 has a backup speed system based on inertial I believe)”

re 787 synthetic airspeed

https://www.ata-divisions.org/S_TD/pdf/other/IntroducingtheB-787.pdf

see pages 39 to 42 approx

Atkins undertook a study of bird strikes that caused damage to aircraft from 1990-2007. I have only had a quick read, but from what I understand there is no documented event where a bird strike damaged an AOA.

If a bird strike was a factor in the Ethiopian crash, this could give us a greater understanding of why Boeing rated the MCAS flight control hazards the way it did.

On a trivia note, approximately 1 in every 300 air accident fatalities is a consequence of a bird strike.

The NASA ASRS database shows 6 incidents spanning 15 years involving AOA and bird strikes:

– May 2019 – Boeing 737 NG

“We then taxied to a remote pad and were met by fire and rescue. An exterior inspection revealed that the FO (First Officer) side AOA (Angle Of Attack) vane had been broken completely off, with the FO pitot tube taking a direct hit and clogged as well.”

– May 2017 – A319

“Post flight inspection revealed a bird strike which completely clogged the standby pitot tube. I believe that the First Officer AOA system was sending bad or missing information to the aircraft. Multiple failures of redundant systems.”

– June 2016 – PC-12

“My suspicion is perhaps a bird hit the AOA probe and then continued on to hit the leading edge of the wing- but this is just speculation.”

– December 2012 – B737-300

“Had stick shaker due to AOA vane being sheared off.”

– November 2012 – B757-200

“Severe bird-strikes, probably 2 or 3 Canadian Snow Geese at 15,000 feet and 330 knots. Severe damage to radome, nose, right engine, possible AOA system, Pitot-Static system, and possible radar damage. ”

– April 2003 – Beechjet 400

“WE HIT 3 BIRDS, ONE ON EACH WINDSHIELD, AND ONE HIT THE AOA PROBE.”(sic)

It is generally held that the ASRS database constitutes only a partial sample of reality.

Regarding the likelihood of birds striking such a small surface, we must keep in mind that many times it is a flock of birds, not a single one. Also the fuselage reduces the domain of uncertainty from 3D space to a 2D surface. In practice it happens more often than intuition leads us to think.

Teavle:

I would certainly like that link.

One of the safety aspects mentioned is that the FAA considers only non-external failures of an AOA for assessment.

It does not include a ramp strike or a bird strike.

Ramp strikes are a constant issue, bird strikes do occur, and the article I read (I did not save it) mentioned a number of them.

It may have to do with reporting not being required, though it certainly should be required for all causes, with ALL failures going into the database rather than being cherry-picked.

The Japanese cherry-picked the Fukushima reactor risk data for earthquakes and tsunamis, even though (from memory) about 150 years earlier there was an equal one that hit the same area. The Japanese have excellent records going back several thousand years.

Not using all the data is pure cherry-picking for economics' sake, not safety's. Boeing can exceed FAA requirements if it wants to.

From the AV Herald database: bird strikes and AOA.

Birds can and do take out AOA sensors, but usually don’t cause the aircraft to crash.

==============

https://www.avherald.com/h?article=49931cb1&opt=0

https://www.avherald.com/h?article=4af0a1c4&opt=0

https://www.avherald.com/h?article=4a41775e&opt=0

https://www.avherald.com/h?article=44935e0f&opt=0

https://www.avherald.com/h?article=417acb53&opt=0

Only once.

A blocked pitot tube by itself can confuse pilots trying to solve the problem, with the stick shaker blaring, airspeed warnings, and instruments falsely displaying measurements. https://lessonslearned.faa.gov/ll_main.cfm?TabID=2&LLID=76&LLTypeID=2

Now it is even more difficult: fed a false AoA input, MCAS kills silently with AND (airplane nose down) trim at double speed, intermittently and hidden from the pilot, even bypassing the aft-column cutout.