Leeham News and Analysis


Engine Development. Part 7. Engine reliability changes the aircraft market


By Bjorn Fehrm

Introduction

September 29, 2022, © Leeham News: The 1970s saw the introduction of the High Bypass engine for the medium/long range Boeing 747, Douglas DC-10, and Lockheed Tristar, with Airbus A300 employing an updated variant of the DC-10 engine for medium range missions.

In the following decades, these engines introduced improved technology and matured into new levels of reliability. With the increase in reliability came changes in how long-range aircraft were designed.

Summary

- The engine development after the introduction of the high bypass turbofans in the 1970s focused on reliability and higher efficiency rather than new design principles.

- The change in reliability made the two-engined long-range aircraft the winner over three and four-engine aircraft.

The High Bypass Turbofans mature in Technology and Reliability

When the new high bypass turbofans in the 40,000lbf thrust class were introduced, jet engine reliability was such that regulators and airlines wanted at least three engines for long overwater flights.

Such caution was justified; none of the engines introduced for the new widebodies, the JT9D-7 for the 747, the CF6-6 for the DC-10, and the RB211-20 for the L-1011 Tristar, was free from trouble. It was new technology, with different problems affecting the engines.

The design of the engines, with a big fan giving a bypass ratio of around 5:1 and a powerful core with a pressure ratio of about 25:1, gave the engine OEMs plenty of room to improve all aspects of the engines.

Instead of new engines being developed for new aircraft programs, the existing engines were further developed to cover thrust needs from 35,000lbf to 60,000lbf. The increase of thrust by 50%, up from the original 40,000lbf, was achieved by improved technology for several parts of the engines.

The design of the widebody engines from 1970 to 2000

After the rapid changes in engine designs from the 1950s to 1970s, where the commercial engine went from piston props to turboprops, straight jets, and bypass turbofans, the 30 years from 1970 to 2000 saw few architectural changes in the engines. A “typical” design standard developed.

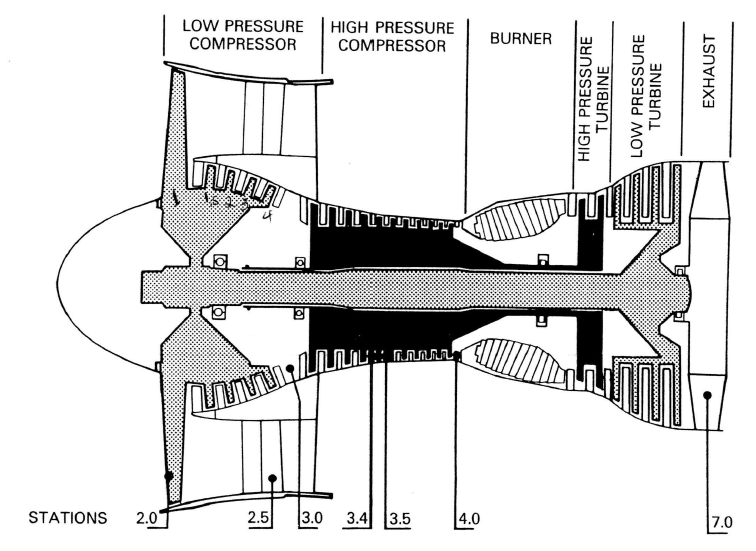

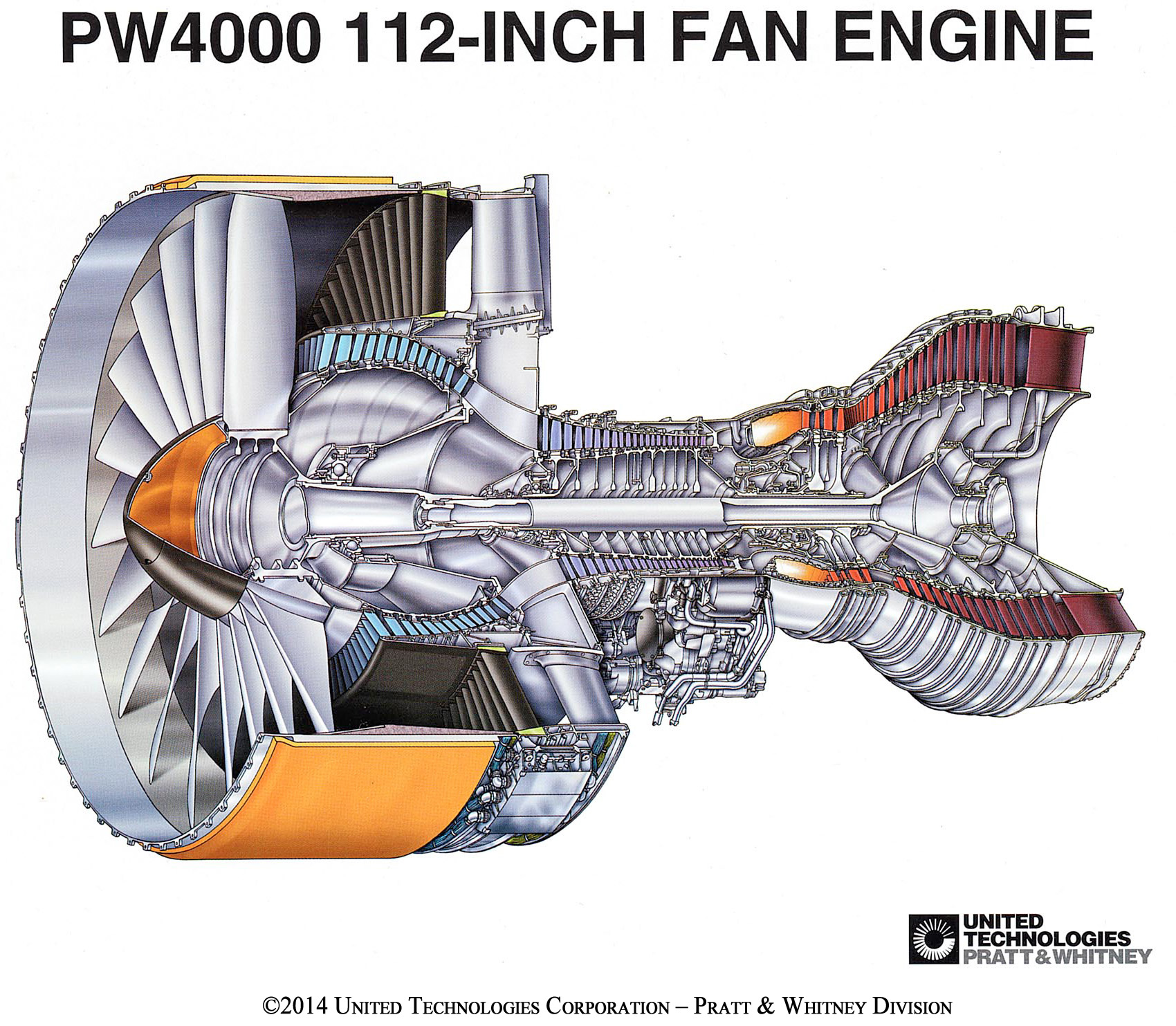

Figure 2 shows the cross-section of the Pratt & Whitney JT9D-7, the first variant that went onto the 747-100 with a takeoff thrust of 46,300lbf.

But it could also show the variant developed for the Boeing 777 25 years later as the PW4074, a 74,500lbf engine that used the same architecture, now with detailed improvements in areas like fan and compressor efficiencies, higher burner temperatures feeding more durable turbines, etc.

With minor changes such as the number of booster stages (the core compressor stages sitting on the same drum and shaft as the fan) or compressor/turbine stages, it could also represent the GE CF6 engine. Even the narrowbody engines we described last week, the CFM56 and V2500, used this architecture of a single fan, followed by three or four booster stages, a 10 to 12-stage high-pressure compressor, burner, one or two-stage high-pressure turbine, and three or four-stage low-pressure turbine.

There was only one exception, the Rolls-Royce three-spool RB211s, later called Trents. Rolls-Royce took issue with the booster in a two-shaft engine rotating at the same low speed as the fan. A fan/compressor blade generates more pressure gain the faster it moves relative to the airflow through the engine. So a blade must either sit on a fast rotating shaft or on a large diameter compressor drum to be effective.

By separating the fan from the much lower radius compressor stages, each compression part of the engine could run at its optimal RPM (Figure 3) and deliver maximum performance.
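The blade-speed argument can be illustrated with a small sketch. The shaft speeds and radii below are hypothetical round numbers chosen for illustration, not data for any RB211 or Trent variant; the only physics used is that the tangential speed of a blade equals the shaft's angular velocity times the blade's radius.

```python
import math


def blade_speed_m_s(rpm: float, radius_m: float) -> float:
    """Tangential blade speed at a given radius: u = omega * r."""
    omega_rad_s = rpm * 2.0 * math.pi / 60.0  # convert RPM to rad/s
    return omega_rad_s * radius_m


# Hypothetical values, for illustration only (not real engine data).
FAN_SHAFT_RPM = 3_500            # assumed low-pressure (fan) shaft speed
INTERMEDIATE_SHAFT_RPM = 7_000   # assumed speed of a separate intermediate shaft
FAN_TIP_RADIUS_M = 1.10          # assumed fan tip radius
BOOSTER_RADIUS_M = 0.45          # assumed radius of the low-radius compressor stages

fan_tip = blade_speed_m_s(FAN_SHAFT_RPM, FAN_TIP_RADIUS_M)
booster_on_fan_shaft = blade_speed_m_s(FAN_SHAFT_RPM, BOOSTER_RADIUS_M)
compressor_on_own_shaft = blade_speed_m_s(INTERMEDIATE_SHAFT_RPM, BOOSTER_RADIUS_M)

print(f"Fan tip, fan shaft:            {fan_tip:6.1f} m/s")
print(f"Booster, tied to fan shaft:    {booster_on_fan_shaft:6.1f} m/s")
print(f"Same radius, on its own shaft: {compressor_on_own_shaft:6.1f} m/s")
```

With these assumed numbers, the low-radius stages move far slower than the fan tip when tied to the fan shaft, and doubling the shaft speed doubles their blade speed. That is the motivation for giving them their own shaft.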

The result was an engine where each compression stage delivered more compression, making for a shorter engine. The RB211s/Trents are known for their low rate of in-service degradation, which is a result of a shorter and, therefore, stiffer engine.

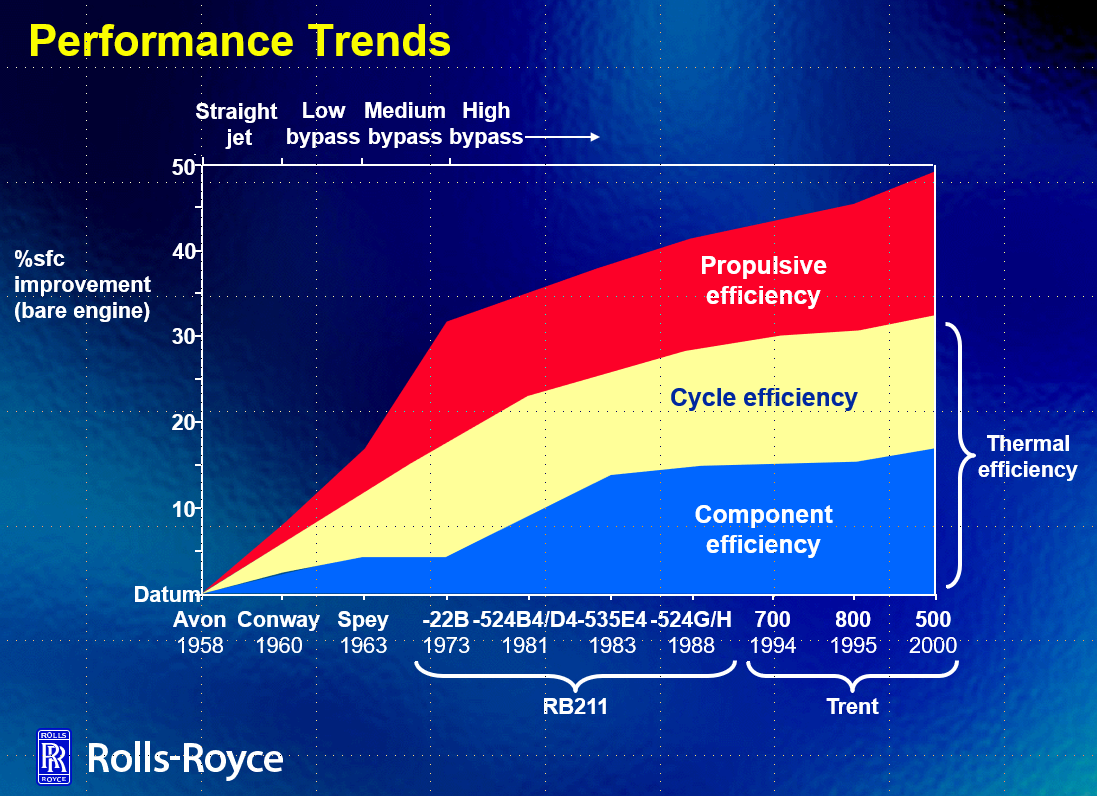

The architectural fuel consumption advantage of the tri-shaft was, in practice, overshadowed by continuous improvements on each section of turbofans. In the following, we use graphs from a Rolls-Royce presentation to give examples of such improvements. All three OEMs made similar steps in technology; we just use the Rolls-Royce graphics for convenience.

The areas of improvement

Figure 4 shows the principal areas of jet engine improvement over the years. It’s specific to Rolls-Royce engines but is valid for all OEMs.

The Propulsive efficiency improvement is the lowering of the Overspeed of the air leaving the engine combined with an increase in mass flow we have discussed before. The mechanism to achieve the lower Overspeed is the increase in Bypass Ratio, BPR.
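A minimal sketch of why a lower Overspeed helps, using the textbook single-stream (Froude) idealization, where propulsive efficiency is 2·V0 / (V0 + Vj) and thrust is mass flow times Overspeed. The flight speed, thrust, and mass flows are hypothetical round numbers, not data for any engine in this article.

```python
def jet_speed_for_thrust(thrust_n: float, mass_flow_kg_s: float,
                         flight_speed_m_s: float) -> float:
    """Exhaust speed needed for a given thrust: F = mdot * (Vj - V0)."""
    return flight_speed_m_s + thrust_n / mass_flow_kg_s


def propulsive_efficiency(flight_speed_m_s: float, jet_speed_m_s: float) -> float:
    """Ideal (Froude) propulsive efficiency: 2*V0 / (V0 + Vj)."""
    return 2.0 * flight_speed_m_s / (flight_speed_m_s + jet_speed_m_s)


# Hypothetical operating point, for illustration only.
FLIGHT_SPEED_M_S = 250.0
THRUST_N = 50_000.0

# Same thrust, two assumed mass flows: the larger one stands in for a higher BPR.
jet_low_flow = jet_speed_for_thrust(THRUST_N, 250.0, FLIGHT_SPEED_M_S)
jet_high_flow = jet_speed_for_thrust(THRUST_N, 500.0, FLIGHT_SPEED_M_S)

eta_low_flow = propulsive_efficiency(FLIGHT_SPEED_M_S, jet_low_flow)
eta_high_flow = propulsive_efficiency(FLIGHT_SPEED_M_S, jet_high_flow)

print(f"Overspeed {jet_low_flow - FLIGHT_SPEED_M_S:.0f} m/s -> efficiency {eta_low_flow:.3f}")
print(f"Overspeed {jet_high_flow - FLIGHT_SPEED_M_S:.0f} m/s -> efficiency {eta_high_flow:.3f}")
```

Doubling the mass flow halves the Overspeed needed for the same thrust, and the idealized propulsive efficiency rises accordingly. Real engines have two streams and installation losses that this sketch ignores.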

The increase in BPR depends on how large a fan the engine can have without running into trouble with blade instability and high mass for the fan and its containment casing, the fan case. This resulted in a compromise BPR of around five for all engines from 1970 until the mid-1990s.

A major step in fan efficiency came with the transition from long, narrow titanium blades to wide hollow titanium blades during the 1980s, Figure 5.

The long, narrow blades could run into a mechanical/aerodynamic instability called flutter, which would destroy the blade. They therefore had mid-span clappers that increased the rigidity of the blades. The clappers disturbed the airflow and thereby reduced efficiency.

As fan blades have no tip flow (it is sealed by the fan casing, unlike on wings, which must be long and narrow to avoid excessive tip flow losses), the blades could be made wider and, therefore, mechanically stiffer. Wider blades needed no clappers, which increased the flow efficiency of the fan.

At the end of the period, GE took a major step with a new engine design for the Boeing 777-200, the GE90-74. Based on propfan research in the early 1990s, GE changed the material in the fan blades from titanium to CFRP (Carbon Fiber Reinforced Polymer), whereby it could produce a 123-inch diameter fan with BPR 8.4. The competing PW4076 had a 110-inch fan, as had the Rolls-Royce Trent 875, giving BPRs of 6.4 and 6.2.

CFRP is very strong in tension, which is the dominant problem for fan blades, but it’s brittle (as Rolls-Royce learned with the RB211-20 Hyfil CFRP fan). By adding a titanium leading edge together with optimized CFRP layup, the GE90 bird ingestion problem could be mastered, Figure 6.

Figure 6. The first CFRP fan blades for the GE90-72 to 94, followed by swept blades for the GE90-110 and 115. Source: GE.

In a later development for the 115klbf GE90-115B, the CFRP blades were aggressively swept to increase the aerodynamic efficiency of the part supersonic (outer part) and part subsonic flow (inner part) of the fan blades.

Cycle and Component Efficiency

The cycle efficiency increase in Figure 4 came from burning the fuel at a higher pressure and temperature, closer to the ideal stoichiometric limit of jet fuel. This was achieved more by component efficiency increases than by changes in architecture. The architectures from 1970 to well past 2000 remained the same, with minor changes such as one compressor stage more or fewer, depending on engine cycle and history.

The fans improved not only by delivering higher bypass ratios. Their ability to impart a suitable Overspeed to more bypass air for a given power taken from the low-pressure turbine (the power transfer efficiency) improved as well.

The engine pressure ratios were increased by higher compressor stage gains when the complex 3D aerodynamics of the stator vanes and compressor blades could be simulated with CFD (Computational Fluid Dynamics).

The higher burner temperatures were made possible by improvements in materials and cooling of the combustor nozzle and first turbine stages. Part came through advanced cooling concepts (Figure 7), part through new materials and coatings.

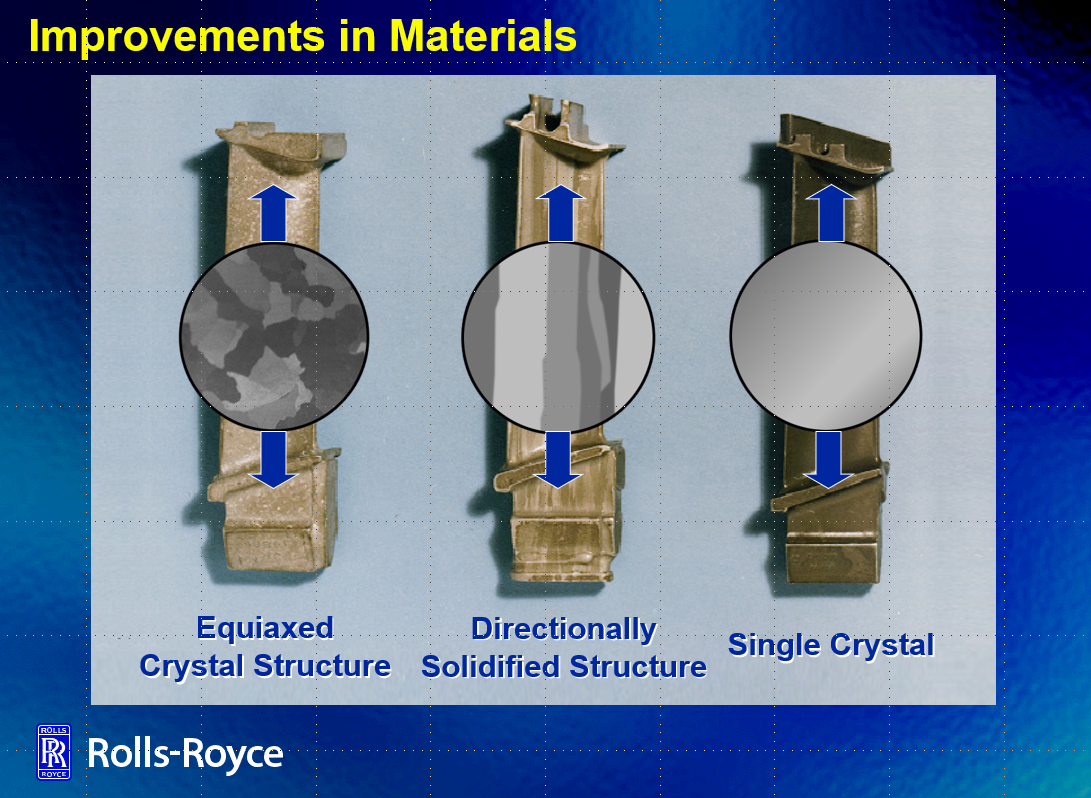

The turbine materials had been different nickel-based alloys for a long time, but by changing the crystal structure of the material (Figure 8), the stress tolerance could be increased, enabling higher turbine temperatures.

With a higher pressure ratio and temperature, the energy of the air from the combustor improved. The core could drive a larger fan through the low-pressure turbine, Figure 9.

The core efficiency increased from around 35% for the early high bypass engine to 50% for the engines in the late 1990s.
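As a rough illustration of what that step means for fuel burn, the sketch below assumes the core must deliver the same useful power in both cases and that the fuel's heating value is unchanged, so fuel flow scales with the inverse of core efficiency. Only the 35% and 50% figures come from the text; the framing is a simplification.

```python
# Core efficiencies quoted in the text.
EARLY_CORE_EFFICIENCY = 0.35   # early high bypass engines
LATE_CORE_EFFICIENCY = 0.50    # engines of the late 1990s


def relative_fuel_saving(old_efficiency: float, new_efficiency: float) -> float:
    """Fractional fuel saving for the same core power output.

    Assumes fuel flow is proportional to power / efficiency, so the
    ratio of new to old fuel flow is old_efficiency / new_efficiency.
    """
    return 1.0 - old_efficiency / new_efficiency


saving = relative_fuel_saving(EARLY_CORE_EFFICIENCY, LATE_CORE_EFFICIENCY)
print(f"Fuel saving for the same core power: about {saving * 100:.0f}%")
```

Under these assumptions the core alone accounts for a fuel saving of about 30%, before any gains in propulsive or power transfer efficiency are counted.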

The aircraft programs and their engines

From the 1970s to the year 2000, the aircraft OEMs introduced seven new aircraft programs that were served by the engines developed for the 747, DC-10, and L-1011. Two new engines were added where the originals were either on the large side (Pratt & Whitney with its JT9D for the 747) or on the small side (GE with its CF6 for the DC-10), but these followed the architecture of the previous engines.

Boeing

Boeing introduced the 767 and 757 a decade after the 747. Pratt & Whitney powered the 767 with the JT9D-7 and renamed JT9D variants, PW4052 and 4060 (where the last digits give the thrust class in 1,000lbf). GE delivered the CF6-80 in thrusts from 48,000lbf up to 63,500lbf for the long-range 767-300ER and 767-400.
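The naming rule mentioned above can be sketched as a small helper. It assumes the thrust class is carried by the last two digits of a six-character PW4xxx designation, which matches the variants named in this article; the function name and the pattern are illustrative, not an official Pratt & Whitney scheme.

```python
import re

# Assumed pattern: "PW4", one further digit, then two thrust-class digits.
_PW4000_PATTERN = re.compile(r"PW4\d(\d{2})")


def thrust_class_lbf(designation: str) -> int:
    """Return the nominal thrust class in lbf encoded in a PW4xxx designation."""
    match = _PW4000_PATTERN.fullmatch(designation.strip().upper())
    if match is None:
        raise ValueError(f"Not a PW4xxx designation: {designation!r}")
    return int(match.group(1)) * 1_000


for name in ("PW4052", "PW4060", "PW4074"):
    print(f"{name}: {thrust_class_lbf(name):,} lbf class")
```

The result is a thrust class, not a certified rating: the article quotes 74,500lbf for the PW4074, for example.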

Rolls-Royce managed to capture a large part of the 757 market with a cropped fan RB211, the RB211-535. By now, the RB211 core was reliable, and in the lower thrust version of 37,000lbf to 40,000lbf, the RB211-535 became the most reliable turbofan of its era. Engines stayed on wing for up to 50,000 flight hours.

The JT9D designs from Pratt & Whitney were made for larger aircraft and could not be successfully downsized for the 757. To capture the 757 market, which could potentially dominate future domestic routes, Pratt & Whitney developed a smaller engine with the latest technology. The JT10D, later PW2037, was born. It had a pressure ratio of 30:1 and a BPR of 5.8. But the new design wasn’t as reliable as Rolls-Royce’s RB211-535. It didn’t capture the major market share, even though it consumed about 10% less fuel than the RB211. By the time the engine matured, Rolls-Royce had taken the lion’s share of the market, and sales of the 757 were dwindling.

Boeing introduced the 777 in 1995, powered by PW4077 engines. All three OEMs were present on the 777-200 and 200ER, Pratt & Whitney with different PW4000, Rolls-Royce with Trent 800, and GE with the new GE90. The GE90, as a new design, suffered reliability problems and was not certified for the larger 777-300. GE redeveloped the GE90 into the larger GE90-115, where it gained exclusivity on the long-range 777-300ER and ultra-long-range 777-200LR.

Airbus

Airbus introduced the A300 with GE CF6-50 51,000lbf engines in 1974 for Air France. SAS introduced a JT9D-59 engined version in 1980. The CF6 and JT9D/PW4000 were retained for the shorter A310, introduced in 1983 by Swissair.

The CFM56-5C we described last week was chosen for the initial four-engine A340 version, the -200 and -300. When Airbus stretched the A340 to compete with the 777, Rolls-Royce won exclusivity with an RB211 variant, the Trent 500.

Airbus developed a two-engined version of the A340 as the A330 by 1994. It had engines available from all three OEMs, the PW4064 to 4068 for Pratt & Whitney, the CF6-80E from GE, and the Trent 700 from Rolls-Royce.

McDonnell-Douglas

The DC-10 was developed into the MD-11. Finnair introduced it in December 1990 with PW4460 engines. The CF6-80C was also sold with the MD-11.

Engine reliability changes the long-range market

When Boeing designed the 747, Douglas the DC-10, and Lockheed the L-1011, more than two engines were needed to get clearance to fly long routes over water. The increased reliability of the engines in the described period changed this.

By 1990 a twin-engined aircraft could get the necessary ETOPS clearances to fly far from possible airports. The 777, with its two engines, came to dominate long-haul from the end of the 1990s at the expense of Airbus’ long-range A340, which was designed with four engines (“as this was the engine number required for safe long-haul flight”).

Two engines have been the norm for long-range aircraft since (with the exception of the Airbus A380, which, due to its sheer size, needed four engines).

From the seventies, big twins took over.

– 1974: A300

– 1982: 767-200

– 1983: A310

– 1993: A330-300

– 1995: 777-200

– 2011: 787-8

– 2015: A350-900

What will be next?

That period had the A340, the A380 and, of course, 2 new versions of the 747: the 400 and 800.

Minor nitpick: Finnair had the GE engine on their MD-11s, not PW.

Mr Fehrm, some pictures can’t load because they are behind the paywall, I guess. Very, very interesting article, thank you for sharing.

I can imagine that when aircraft/engine development over time switches to alternative fuels, speeds, and hybrid solutions to reduce costs and meet environmental restrictions, we’ll see 3-6 engined designs again.

Because thrust & reliability of those new design engines needs decades to mature to meet e.g. ETOPS 240 requirements, like we saw with turbofans.

The A340 was also set to have 4 of the then revolutionary IAE V2500SF SuperFans.